
Sketches for body-machine configuration: Corpus Nil

As my PhD draws to a close this summer, I’m in the studio rehearsing a new performance that will be previewed at NIME, the international conference on New Interfaces for Musical Expression, at the end of the month.

I’ve been working together with Baptiste Caramiaux to create Corpus Nil, a new body performance that wraps up the research I’ve done in the past three years combining physiological computing, performance art and cultural studies of the body to examine physical expression in sound performance.

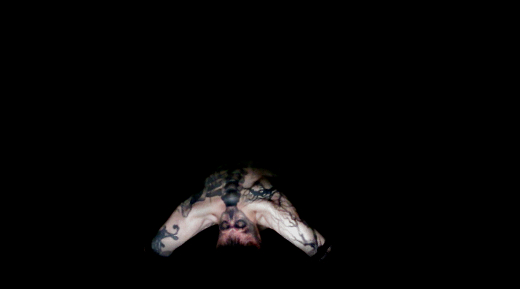

Here are some rehearsal pictures, visual sketches of the final performance.

Corpus Nil is a performance for reorganised body, octophonic surround sound, interactive lights and biowearable musical instrument.

Through a series of movements that explore the limits of muscular tension, limb torsion, skin friction, and equilibrium, the body is reorganised.

As the body morphs, its muscular force and brain activity are transformed into digital sounds and light patterns.

The body and the machine are configured into one entity. Their relation is not one of control, but one of becoming.

Together, the human body and the machine body become a new body.

A body that is not necessarily human or cyborg: it’s an expressive body of flesh, circuitry, transducers, sound and lights.

Stay tuned for a video teaser to come out soon.

Two new books: body theory, computation and sound in performance

I’m delighted to announce the publication of two books on art and science, to which I’ve contributed two different essays. These draw upon my recent doctoral research on corporeality and computation in sound performance: multidimensional, practice-based research that weaves together resources from cultural studies of the body, performing arts and human-computer interaction.

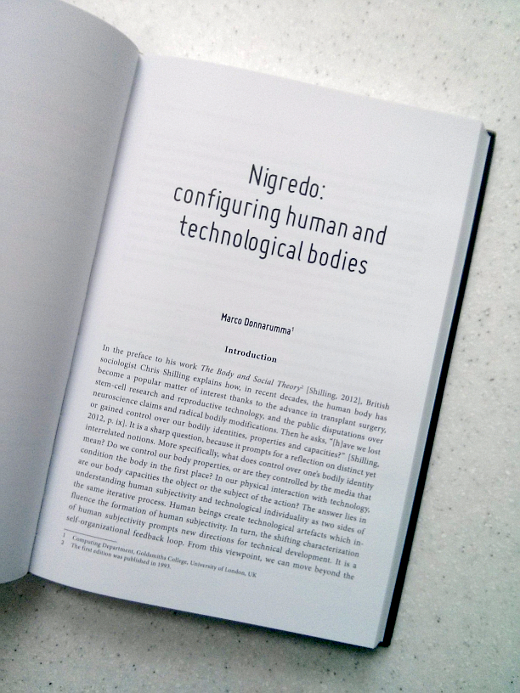

In “Experiencing the Unconventional: Science in Art”, I propose and discuss the notion of “configuration” of human bodies and machines. I do so by linking philosophy of human individuation, body performativity and the use of sound to mediate human physiology with machine circuitry.

“Do we control our body properties, or are they controlled by the media that condition the body in the first place? In our physical interaction with technology, are our body capacities the object or the subject of the action? The answer lies in understanding human subjectivity and technological individuality as two sides of the same iterative process. Human beings create technological artifacts which influence the formation of human subjectivity. In turn, the shifting characterisation of human subjectivity prompts new directions for technical development. It is a self-organisational feedback loop. From this viewpoint, we can move beyond the idea where identity is controlled, and embrace a notion where human subjectivity emerges through the configuration of human bodies with technological bodies. The term ‘configuration’ will be used in this essay to indicate not a mere pairing of machine and human bodies, but their arrangement in particular forms and for specific purposes.”

Read the full article or buy the book.

In the second book, “Meat, Metal and Code \ Contestable Chimeras – STELARC”, I examine the practice of pioneering body artist Stelarc. By comparing, through the lens of Deleuze’s philosophy of sensation, the bodies in Francis Bacon’s paintings with those in Stelarc’s performances, I elaborate on the notions of fluid flesh and rhythmic skin that link their works.

“According to Deleuze, when Bacon first draws a head and then scrubs the eyes and the mouth with a brush, he deforms the Figure by exerting directional forces on the canvas. The physical movement of the brush is the force that makes the drawing of the head mutate into something else, something that is not a human nor an animal face. This is the moment when the sensation is brought to light. By looking at the amorphous Figure, one can feel the sensation of those forces, their rhythm. Similarly, in Stelarc’s work, the physical tension of the fish hooks, the ropes and the gravitational field are the forces that make the body mutate into something else, a stretched mesh of skin emptied of its flesh and bones. By looking at Stelarc’s suspended body one can feel the sensation of those forces. The body is motionless to the eyes, but the rhythm of the forces that pull the body downward and upward is clearly expressed by the deformation of the skin. The skin becomes rhythm.”

The full article can be read here, and the book is available at this page.

Enjoy the reading!

Expressivity, Muscle Sensing and Intelligent Machines at CHI 2015

We (the EAVI research group at Goldsmiths, University of London) just got back from the SIGCHI Conference on Computer-Human Interaction in Seoul, Korea. CHI is one of the largest conferences in the field, with over 3000 attendees this year.

The CHI experience is as overwhelming as it is exciting. With 15 parallel tracks, there’s always something interesting to see and something equally interesting you are going to miss. To add to the thrill, this year the conference was hosted in a massive multipurpose complex, the COEX, which includes a mall, restaurants and other conferences all in the same venue. I leave the rest to your imagination.

My contribution to the conference was twofold. Over the weekend I joined the workshop “Collaborating with Intelligent Machines” and during the week we presented a long paper on our latest research on using bimodal muscle sensing to understand expressive gesture.

Organised by consortium members of the GiantSteps research project, Native Instruments (DE), STEIM (NL) and Dept. of Computational Perception, Johannes Kepler University (AT), the workshop ran for a full day and involved several researchers working on embodied musical interaction, music information retrieval and instrument design.

Led by Kristina Andersen, Florian Grote and Peter Knees, we first went through brief presentations of personal research, including a keynote by Byungjun Kwon, then engaged in a brainstorming session on the possibilities of future music machines, and finally went on to build (im)possible musical instruments using props like cardboard, scissors, tape and plastic cups.

Finally, we closed the workshop by discussing the ideas that had emerged throughout the day, and in the evening we joined local experimental musicians for a sweet concert and some drinks.

On Monday the conference started at full speed. Dodging rivers of attendees, we managed to make our way into the keynote venue, and started catching up with colleagues from around the world.

On Thursday, we presented a long paper entitled “Understanding Gesture Expressivity through Muscle Sensing”. The paper, by Baptiste Caramiaux, myself and Atau Tanaka, is actually a journal article which we published in a recent issue of the Transactions on Computer-Human Interaction (TOCHI).

Our contribution focuses on expressivity as a visceral capacity of the human body. In the article, we argue that to understand what makes a gesture expressive, one needs to consider not only its spatial placement and orientation, but also its dynamics and the mechanisms enacting them.

We start by defining gesture and gesture expressivity, and then present fundamental aspects of muscle activity and ways to capture information through electromyography (EMG) and mechanomyography (MMG). We present pilot studies that inspect the ability of users to control spatial and temporal variations of 2D shapes and that use muscle sensing to assess expressive information in gesture execution beyond space and time.

This leads us to the design of a study that explores the notion of gesture power in terms of control and sensing. Results give insights to interaction designers to go beyond simplistic gestural interaction, towards the design of interactions that draw upon nuances of expressive gesture.

Finally, we showed a small excerpt from a new performance I’ll be previewing at the upcoming NIME conference in Louisiana (see below, and yes, that’s a sneaky preview!). Here I have implemented the feature extraction system described in the article, modifying and adapting the system to the fuzzier requirements of a live performance.

The talk was very well received and prompted some interesting questions for future work. Some pointed to the use of our system together with posture recognition systems to enrich the user’s input, and others questioned whether subtle tension and force levels can be examined with our methodology. Food for thought!

To conclude, here are some personal highlights of the conference:

- “The transfer of learning as HCI similarity: Towards an objective assessment of the sensory-motor basis of naturalness”

- “Advancing muscle-computer interfaces with high-density electromyography”

- “Proprioceptive interaction”

- “From user-centered to adoption-centered design: a case study of an HCI research innovation becoming a product”

- “Collaborative accessibility: How blind and sighted companions co-create accessible home spaces”

- “I’d hide you: Performing live broadcasting in public”

- “As light as your footsteps: Altering walking sounds to change perceived body weight, emotional state and gait”

Needless to say, Seoul was surprising and heartwarming as usual, so… ’til the next time!

Google New Breed: on the commodification of digital art and its young minds

Google is breeding the young minds of the next generation of artists.

Don’t get me wrong, it’s not my opinion, it’s simply what Google states in the marketing campaign (see heading images) that is accompanying the infamous DevArt exhibition at the Barbican in London.

There has been an intense debate in the past weeks on what this powerful curatorial and marketing move by Google actually means. Will it affect or benefit the already unsteady and ephemeral world of digital art, and how? The discussion has rapidly condensed across the web in the form of newspaper articles, artist-led initiatives, and Twitter and Pastebin statements.

After following the whole issue in quasi-silence, I felt the need to make a statement and share it with you. I won’t lose more time on a preamble and will get straight to the point.

Let’s start with a curious observation: the term that Google’s marketing team has chosen for their campaign is “breed”. The first meaning of breed is “to produce offspring, typically in a controlled and organized way”. Quite telling, isn’t it?

To think that large corporate entities, such as the Barbican and Google (Google Creative Lab to be precise), are being ingenuous, ignorant or naive, and thus that their initiatives won’t have a relevant impact, implies a rather distorted viewpoint.

It’s like staring at a man putting a match to a haystack, and thinking that nothing bad will happen because the man doesn’t know the haystack will be reduced to ashes. Well, let him do it, you know.

This is an easy way to discard a much deeper problem, which outlines some seriously worrying links between digital art curation and its relation to the art establishment and the corporate lobbies. Links of which, most of the time, we are either unaware or, worse, not interested in.

To bring more arguments to the table, the ones below are some other tips of that monstrous iceberg:

a) the involvement of Sound and Music in another Google-curated open call for emerging sound artists, which happened earlier this year under very dim lights; note, another intervention in the UK.

b) the massive cultural hijacking project by Google, which they aptly termed “Google Cultural Institute”. Far from being a mere digitisation of museum catalogues, it is being used as a means to curate events and open calls which, as with DevArt, are aimed at breeding **young artists** (yes, breeding, like animals in captivity), as in the case of the collaboration with Sound and Music above. Young artists does not mean 30-year-old emerging artists. Young artists are students and part-time workers who follow their passion and dream of being able to live off their art, or maybe simply of being able to express their art. As all of us were when we started.

c) on a slightly different but related note, the boom of Sedition, an online platform designed as an appealing app market for well-packaged and well-known artworks. And I do not mean to take away any of the artistic value of the works sold there. Despite having opened their doors only very recently, they had a stand at this year’s Sonar+D. Just to exemplify the links already in place.

Now, all of this shows that Google, the Barbican, Sound and Music, and many other entities which we are not aware of yet, have *already established* intimate links to work towards new ways of “curating” (or perhaps “commodifying” is a more accurate term) digital art, sound art, music, etc.

What we see and discuss today is the result of several months, if not years of discussion, planning and agreements, both financial and curatorial.

I don’t think there’s anything we can do to directly disrupt those links, given the scary results they have led to so far, but what one must do is to become aware that this is not a game of capricious millionaires.

Google is one of the richest capital holders in the world, a corporation that owns and develops the best machine learning techniques, that bought the six best companies in humanoid robotics, that works with US military defense developing technologies for them, etc. etc.

Stating the obvious here, but sometimes it does not hurt.

If they are investing so much in digital art, it is fair to think this is not a caprice but a well-thought-out and far-reaching business plan. Like any of their other businesses.

How do we claim our position in their business plan? Is that what we want?

Or perhaps, can we work towards alternative programs? And how?

The comparison with art patronage across the centuries does not work in this case; it’s just more smoke in the eyes. Renaissance art patrons didn’t have a database of all your documents, pictures, chats, videos, calendars and locations.

p.s. If you wish to respond please send your contribution via email to m [at] marcodonnarumma [dot] com, and I’ll publish it here. Unfortunately I don’t have time to deal with a proper commenting system and the spam issues it brings. Thanks!

Essence: a PhD thesis in less than 300 words

Here it comes, it’s that moment when you have to write your PhD thesis in less than 300 words. You know, it’s one of those awkward yet incredibly helpful challenges that, as a PhD candidate, you had better face sooner rather than later.

You want your research to be understood by anybody with any background in the shortest amount of time possible (I’m talking about seconds here), and you want them to understand it in detail. You want the exact meaning of each (precious) term you use to be enforced by the context you provide. You want to express clearly what your concern or subject matter is and how you will go about it, and that’s all; you don’t want to mention anything you won’t do. You want to preempt any question that may arise in the mind of your readers. You want those readers not to feel the need to check their Facebook timeline for at least a couple of minutes after reading your abstract because they are actually still thinking about what you wrote. Be clear, rigorous, engaging and a little visionary.

As we all know, advice is more easily given than put into practice, so you will be my proofreaders. So, after two years of research, here it goes. On the second attempt I made it in 247 words.

“The subject of the research is the human body in the live performance of electronic music. By using notions selected from the field of body theory, I discuss the practice of established performers in the field. I look at how they combined human bodies and technological ones and what aesthetic and technical results their practice has led to. In so doing, I develop an argument for the importance to elaborate a model of human-machine interaction that goes beyond a notion of control. A model where the performer’s physical engagement with the instrument is paramount and the relation between the performer and the instrument is one of mutual dependence.

In order to build such a model, I use an interdisciplinary methodology which investigates the physiological and physical qualities of a performer’s motion. Namely, I look at the ways in which those qualities can affect the functioning of a digital musical instrument, and vice versa, how the functioning of a digital musical instrument can affect the physiological and physical qualities of a performer’s motion. This theoretical and technical knowledge will be used to develop performances to be exhibited publicly and evaluated through peer-review, so as to feed back into the research process.

This work, it is hoped, will contribute a methodology and a set of computational tools, which on one hand, produce novel ways to understand and design musical expression with body technologies, and on the other, encourage performance practices where human and technological bodies complement each other’s capabilities.”

The core research questions are:

Q1: Can notions from body theory inform the design of digital musical instruments?

Q2: If so, does the performance of live electronic music change and how?

Q3: Can physiological sensing provide an entry point to a performance model that goes beyond control?

If you have comments, please don’t hesitate to write to me; I’m eager to discuss things and thoughts.

Francis Bacon’s Last Interview, by Francis Giacobetti, 1991-1992

Francis Giacobetti | 1991 | Meat Triptych

“My painting is not violent; it’s life that is violent… Sexuality, human emotion, everyday life, personal humiliation… violence is part of human nature… Even within the most beautiful landscape, in trees, under the leaves the insects are eating each other; violence is part of life.”

Francis Bacon

My first thought on reading this interview was that we should take real care of the few artists who are able to reflect on this topic. Because there will always be only a few of them, and we need them badly, to remind us of what everybody wants to forget.

Here’s the complete article at Aphelis.net, “an iconographic and text archive related to art, communication and technology”.

Taken Apart and Put Together: Human, Machine and Sound Technologies

The following is the introduction of an essay entitled “Taken Apart and Put Together: Human, Machine and Sound Technologies”. The title draws on a passage by Donna Haraway. I’ve just completed the essay for an upcoming book on art science, computation and life. The text is also part of my ongoing research into the combination of body theory and machine learning for biophysical musical instruments and performance strategies. In this sense, it gives a glimpse of the path I’m following. Hope the text can give you some ideas. Enjoy the reading…

UPDATE: Added some useful references at the bottom of the article.

One of the unique characteristics of sound lies in that, among all the energy waves that surround us, acoustic waves actively influence the human body at different levels, from intangible emotional arousal to physical induction of resonances, from pleasure and melancholy to stillness and dance. From another point of view, for the human being sound is sound only when it is heard. It is in our ears that acoustic waves become a discernible event that we can feel, listen to and define as a sound. Because the experience of sound is intrinsically linked to the human body, the performance of music and the design of musical instruments have, for the most part, been grounded in body motion. An obvious example is provided by the centuries-old history of traditional musical instrument making. String, wind and percussion instruments are all based on an interaction model whereby the kinetic energy of the human body excites the body of the instrument, which then produces sound. With the arrival of analog synthesisers, the role of the human body in the performance of sound technologies shifted from supplying acoustic energy to the instrument to controlling its modulation parameters. Turning knobs, plugging and removing electrical cables, or tapping on a switch became understood as another gesture vocabulary. The idea of the body as a means to control sound technologies was then strengthened by the coming of digital computation, in the form of laptop computers and digital music software. Orchestras of ‘virtual synthesisers’ are operated by simply typing on a keyboard and moving a mouse. The performance of laptop music minimises the impact of the player’s physical effort on sound production in exchange for easy access to a virtually infinite number of musical parameters and operations.

With the increasing accessibility of technologies that gather information on a user’s gestural motion, and with the rise on the market of portable devices, the performance of sound technologies has gone through a re-evaluation of the player’s physical engagement in the production of sound. This is the first core concern of this essay.

How can we leverage a player’s physical engagement with sound and computational technologies to enable the creation of new sounds and exciting performance strategies?

In which ways can we move beyond a metaphor of control towards an open-ended and mutual mediation of the player and the instrument?

It would be misleading however to discuss the shifting characterisation of the body in the performance of electronic music without adding to the picture the equally mutable characterisation of the body in the cultural milieu. The human body is cultural and political. It is cultural in that it is the active means by which public cultural artifacts are conceived and made. It is political because it is the subject and object of social strategies involving power, ethics and belief. It follows that the cultural understanding of the human body has important, yet often overlooked, repercussions on the understanding of the player’s body in a public musical performance.

Before the establishment of a dedicated field of studies, the body was discussed as merely an operational part of the overarching structure of society. This approach has prompted a reductive understanding of the human body that has been rendered most notably through the views of essentialism and social constructivism. The former view, indebted to Descartes’ philosophy of dualism, sees the human being as an entity split between the higher activity of the mind and the lower fleshly mechanisms of the body. Social constructivism, on the other hand, sees human beings as individuals whose subjectivity is constructed through the social making of culture. Thereby social constructivism does not account for the body or the world we live in as means of production of the social, but rather as results of it. Public critical discourses directly addressing the body first arose from the second feminist wave during the ’60s and the ’70s. The feminist critique has addressed the objectification of the female body by a male-dominated power structure. Specifically, a broad public debate has focused on issues like family, sexuality, workplace conditions, and reproductive rights. Since then, a sociology of the body, or body theory, has been established. Sociologists, philosophers, and scientists have investigated the body as the site where and through which identity, gender, religion, and knowledge are nurtured. These perspectives have in turn fostered the cultural analysis of the relation between the machine and the human body. Namely, the humanities have elaborated new definitions of what it means to be human in view of the increasingly intimate integration of the human body and machinic, computational, and biological technologies. This is the second core issue of the present work.

How can we discuss critically the integration of man and machine at a cultural and political level?

How can such understanding inform the way we design technological musical instruments and the related performance strategies?

The term ‘integration’ is used above purposely to indicate not a mere pairing of the machine and the human body, but the intermixing of two things that have been long segregated. The integration of the human and the machine is not intended at a metaphysical level, but at a practical one. Biomedical technologies, or biotechs in short, have provided us with an entry point to a still largely unexplored territory where human and technological bodies blend together. DNA molecules are used to do computations in test tubes; the entire human genome heritage is stored and categorised in digital databases accessible via the Web; artificial organs are used to replace malfunctioning human organs; electrical and mechanical signals produced by physiological processes are channeled through the circuits of robotic prostheses that enable human beings to restore their bodies. This is the result of a long history of research in the field of biomedical engineering and its branches, which include neural, genetic and tissue engineering, medical implants and prosthetics, and biomedical equipment design. Far from wanting to center this work on biomedical engineering, it will be shown how the knowledge produced in that field has been re-appropriated by performance art practice towards the critical questioning of human physicality and biology.

To summarise, the previous paragraphs have mapped out the broad areas of study that this essay will touch upon, namely a) performance of sound technologies, b) body theory, and c) biomedical engineering. Within those areas we have identified the core points of interest, which are i) an open-ended and mutual mediation of a player and the instrument that leverages physical engagement, and ii) the cultural and political nuances of the integration of human and machine and its relevance to musical instrument design. In order to elaborate a perspective that embraces such diverse topics we will make use of two key concepts: unfinishedness and biomediation. Unfinishedness relates to the incomplete nature of the human being and the ways such nature makes us bound to extend our bodies through technologies. Biomediation refers to the intermixing of humans and machines at the physical and biological level, and to the modalities whereby this extends the expressive capacity of both the human and the technological bodies. In the remainder of this essay we review the origins and most relevant developments of those two key concepts in philosophy and cultural studies, and then discuss their applications in artistic performance, with a special focus on the role of sound technologies.

Some core references the full essay draws upon:

Bedau, M. and P. Humphreys

2008. Emergence: Contemporary Readings in Philosophy and Science. MIT Press.

Braidotti, R.

2013. The Posthuman. Cambridge, UK, Malden, MA, USA: Polity Press.

Clough, P. T.

2008. The Affective Turn: Political Economy, Biomedia and Bodies. Theory, Culture & Society, 25(1):1–22.

Gray, C. H., H. Figueroa-Sarriera, and S. Mentor

1995. The Cyborg Handbook. New York and London: Routledge.

Haraway, D.

2003. The Companion Species Manifesto: Dogs, People, and Significant Otherness. Prickly Paradigm Press.

Hayles, N. K.

1999. How We Became Posthuman: Virtual Bodies in Cybernetics, Literature, and Informatics. University of Chicago Press.

Massumi, B.

2002. The Evolutionary Alchemy of Reason. In Parables for the Virtual: Movement, Affect, Sensation. Durham: Duke University Press.

Maturana, H. R. and F. J. Varela

1980. Autopoiesis and Cognition: The Realization of the Living. Springer.

Mulhall, S.

1996. Heidegger and Being and Time. London and New York: Routledge.

Shilling, C.

2012. The Body and Social Theory. Third Edition. Sage Publications Ltd.

Simondon, G.

1992. The Genesis of the Individual. In Incorporations (Zone 6), J. Crary and S. Kwinter, eds., Pp. 297–319. Zone Books.

Stelarc

2002. Towards a Compliant Coupling: Pneumatic Projects, 1998-2001. In The Cyborg Experiments: The Extension of the Body in the Media Age, Pp. 73–77. Bloomsbury Academic.

Tanaka, A.

2011. BioMuse to Bondage: Corporeal Interaction in Performance and Exhibition. In Intimacy Across Visceral and Digital Performance, M. Chatzichristodoulou and R. Zerihan, eds., Pp. 1–9. Basingstoke: Palgrave Macmillan.

Thacker, E.

2003. What is Biomedia? Configurations, 11(1):47–79.

Turner, B. S.

1992. Regulating Bodies: Essays in Medical Sociology. Taylor & Francis.

Varela, F. J., E. Rosch, and E. Thompson

1991. The Embodied Mind: Cognitive Science and Human Experience. MIT Press.

Waisvisz, M.

2006a. Panel Discussion moderated by Michel Waisvisz. Manager or Musician? About virtuosity in live electronic music. In International Conference on New Interfaces for Musical Expression, May, p. 415, Paris, France.

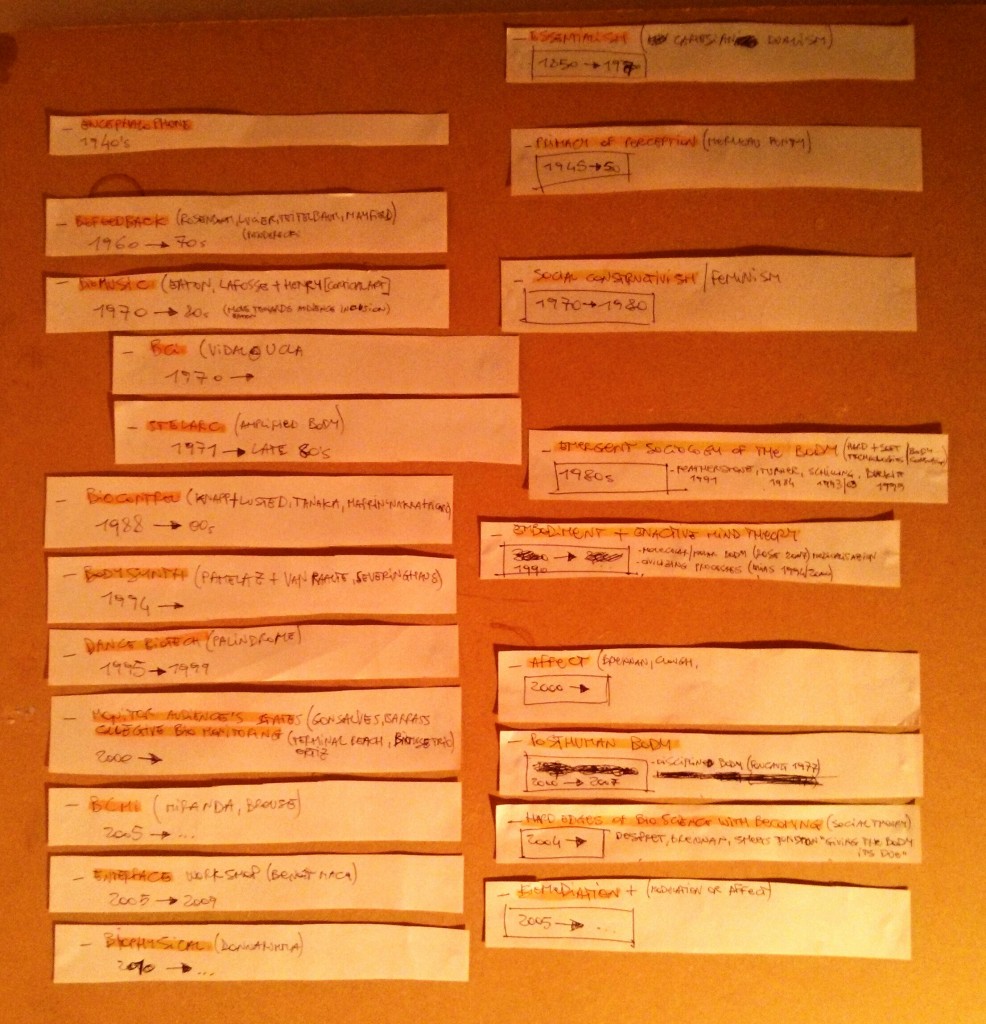

The technological body and the performing arts: a cultural and historical review

In an attempt to map out the origins of the different perspectives on the technological body in the performing arts, I began compiling different timelines, looking at music technology, performance art, sociology of the body, system theory, natural sciences, and history. Now I’m in the process of grasping core theories in those disciplines and placing them in their historical context. The next step is to find links and connections that, by sweeping across disciplines, will reveal the cultural, technological and social modalities by which the body, in the technological performance of art, has formed in the way we know it today. It is complex research and it is proving difficult to keep focused on the subject matter, yet it is incredibly fascinating to gain a detailed map of this topic, and I’m confident it has the potential to be an interesting contribution both to the community and my own practice.

I’ll be updating the blog with further notes and thoughts as they emerge. Click the image below to enlarge.

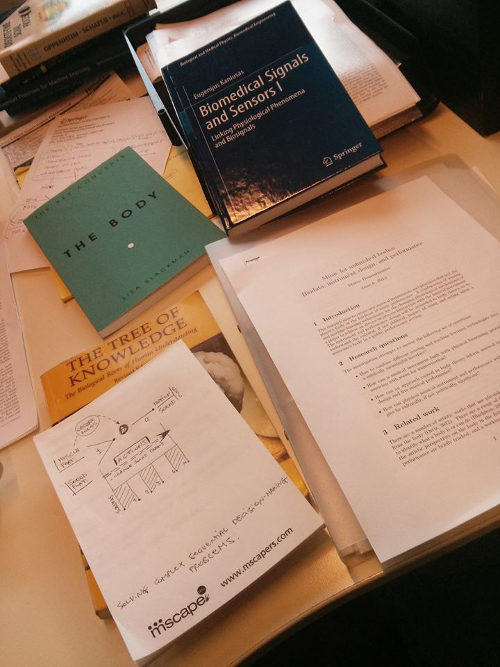

Intro to the politics of physical musical performance

This picture pretty much sums it all up. Given the interest of motion-gesture researchers in using mostly cognitive science to understand sound-making motion, there is room to explore a politically engaged perspective that straightforwardly addresses the (player’s) body.

The body is a political entity and body-based music performance produces political messages. A body is political for it touches the aspirations and the “open wounds” of everyone’s cultural and emotional background. Think not only of gender, identity, self-acceptance, who I am, who I want to be, who I pretend I am, but also everyday life as a part of a larger society of bodies. We are bodies that interact with and affect other bodies countless times a day, and together we put forth our world. What can a body do? What can multiple bodies do? How are our bodies modified by the instruments we use? And how does this come down to the design of new musical instruments?

The politics of physical musical performance might not be as evident as for performance art, yet it is enacted with or without the player’s will. This is valid for the overall practice of music performance, and is particularly true when it comes to works that augment the body with technology. The question is: How can we make the design of physical musical instruments and performance with (bio)technologies politically significant?

An interesting perspective is forming by drawing upon the notions below. The summer will be the time for me to write a related literature review and thus combine this knowledge into an original form.

effort, a body whose physicality defines and mediates with the musical instrument (Ryan, 1991);

emergence, a body always in process (Massumi, 2002);

enactment, a body that brings forth a world by knowing (Maturana and Varela, 1992);

biomediation, a body reconfigured by media practices that condition its biology (Thacker, 2003).

References:

Maturana, H. R., and Varela, F. J. 1992. The tree of knowledge. Shambhala.

Massumi, B. 2002. Parables of the virtual: Movement, affect, sensation. Durham, NC: Duke University Press.

Ryan, J. 1991. Some remarks on musical instrument design at STEIM. Contemporary Music Review, 6(1):3-17.

Thacker, E. 2003. What is Biomedia? Configurations, 11(1):47-49.

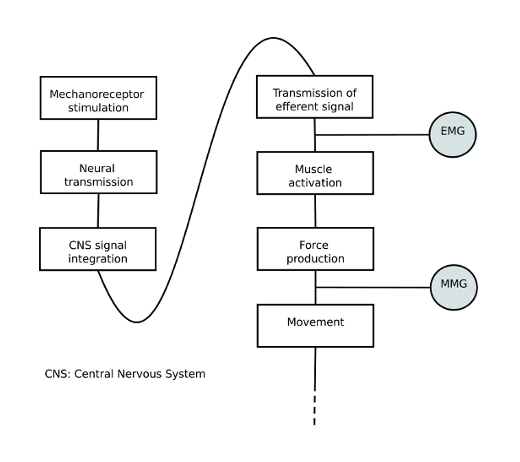

An early musical instrument combining EMG and MMG biosignals

Following our previous study on biophysical and spatial sensing, we narrowed down the focus of our research, and constrained a new study to MMI with two biosignals only. Namely, we focused on the mechanomyogram (MMG) and the electromyogram (EMG) from arm muscle gesture. Although there exists research in New Interfaces for Musical Expression (NIME) focused on each of the signals, to the best of our knowledge the combination of the two has not been investigated in this field. The following questions initiated this study: In which ways can EMG/MMG be analysed for complementary information about gestural input? How can musicians control the two biosignals separately? What are the implications at the low level of the sensorimotor system? And how can novices learn to skillfully control the modalities?

Our interest in conducting a combined study of the two biosignals lies in the fact that they are both produced by muscle contraction, yet report different aspects of the muscle articulation. The EMG is a series of electrical neuron impulses sent by the brain to cause muscle contraction. The MMG is a sound produced by the oscillation of the muscle tissue when it extends and contracts.

In order to learn about the differences and similarities between EMG and MMG we looked at the related biomedical literature, and found comparative EMG/MMG studies in the fields of sensorimotor system and kinesis research. We selected aspects of gestural exercise where the MMG and EMG carry complementary information. For the interested reader, further details will soon be available in our related NIME paper.

We used those aspects of muscle contraction to design a small gesture vocabulary to be performed by non-expert players with a bi-modal, biosignal-based interface created for this study. The interface was built by combining two existing separate sensors, namely the Biomuse for the EMG, and the Xth Sense for the MMG signal. Arm bands with EMG and MMG sensors were placed on the forearm. One MMG channel and one EMG channel were acquired from the users’ dominant arm over the wrist flexors, a muscle group close to the elbow joint that controls finger movement.

In order to train the users with our new musical interface we designed three sound-producing gestures. The EMG and MMG are independently translated into sound, so that every time a user performs one of the gestures, one or the other sound, or a combination of the two, is heard. Users were asked to perform the gestures twice: the first time without any instruction, and the second time with a detailed explanation of how the gesture was supposed to be executed. At the end of the experiment, we interviewed the users about the difficulty of controlling the two sounds. We studied the players’ ability to articulate the two modalities through video and sound recordings, and analysed their interviews.
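To give a rough idea of what translating the two signals independently into sound can mean, here is a minimal Python sketch. It is only an illustration and not the mapping used in the study: the RMS envelope, the window size, the gain range and all function names (`rms_envelope`, `to_gain`, `map_biosignals`) are assumptions made for the example, and the input is toy data rather than real sensor recordings.

```python
import math

def rms_envelope(samples, window):
    """Return the RMS amplitude of each non-overlapping window of samples."""
    envelope = []
    for start in range(0, len(samples) - window + 1, window):
        frame = samples[start:start + window]
        envelope.append(math.sqrt(sum(x * x for x in frame) / window))
    return envelope

def to_gain(envelope, floor, ceiling):
    """Linearly map envelope values onto a 0..1 gain, clamping out-of-range values."""
    span = ceiling - floor
    return [min(1.0, max(0.0, (v - floor) / span)) for v in envelope]

def map_biosignals(emg, mmg, window=4):
    """Drive two separate sounds: one gain stream per biosignal (hypothetical mapping)."""
    emg_gain = to_gain(rms_envelope(emg, window), floor=0.0, ceiling=1.0)
    mmg_gain = to_gain(rms_envelope(mmg, window), floor=0.0, ceiling=1.0)
    # Each pair is (gain of the EMG-driven sound, gain of the MMG-driven sound).
    return list(zip(emg_gain, mmg_gain))

# Toy data: the EMG is active only in the first frame, the MMG only in the second.
emg = [0.5, -0.5, 0.5, -0.5, 0.0, 0.0, 0.0, 0.0]
mmg = [0.0, 0.0, 0.0, 0.0, 0.25, -0.25, 0.25, -0.25]
gains = map_biosignals(emg, mmg)
print(gains)
```

Because each signal feeds its own gain, a gesture that excites only one modality makes only one sound audible, while a gesture that excites both produces a blend of the two.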

The results of the study showed that: 1) MMG/EMG provide a richer bandwidth of information on gestural input; 2) MMG/EMG complementary information varies with contraction force, speed, and angle; 3) novices can rapidly learn how to control the two modalities independently.

These findings motivate a further exploration of an MMI approach to biosignal-based musical instruments. Future work should look at the further development of our bi-modal interface by designing discrete and continuous multimodal mappings based on EMG/MMG complementary information. Moreover, we should look at custom machine learning methods that could be useful in representing and classifying gesture via combined muscle biodata, and data mining techniques that could help identify meaningful relations among biodata. Such information could then be used to imagine new applications for bodily musical performance, such as an instrument that is aware of its player’s expertise level.

Pictures by Baptiste Caramiaux, and Alessandro Altavilla.