
Workflow improvements, an efficient router for MMG data mapping

Richness of colour and sophistication of form.

How can I achieve a seamless interaction with a digital DSP framework that guarantees richness and sophistication in real time?

Moreover, the interaction I am aiming at is a purely physical one.

Gesture to sound: this is the basic paradigm I need to explore.

This is a fairly complex issue, as it requires a multi-modal investigation encompassing interrelated areas of study. The key points can be summarized as follows:

- developing a musical aesthetic by which appropriate DSP techniques can be conceived;

- coding relevant audio processing algorithms for muscle sounds;

- analysing and understanding the more appropriate gestures to be performed;

- training my muscles in order to learn how to execute specific gestures efficiently;

- developing a mapping system of kinetic energy to control data.

Drawing from these observations a roadmap for further implementation of the Xth Sense framework, I found myself struggling with a practical issue: the GUI I was developing did not fit my needs, and it was slowing down the whole composition process.

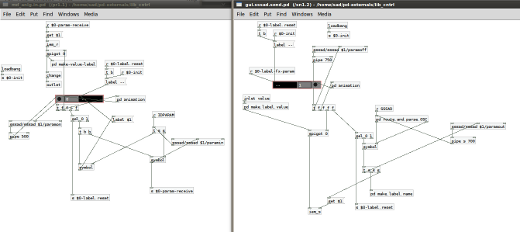

In order to comfortably concentrate on composition and design of the performance I needed an immediate and intuitive routing system, which would allow me to map any of the MMG control values available to any of the DSP parameter controls in use.

Such a router would satisfy a twofold mapping: one-to-one and one-to-many.

In Pure Data control parameters can be distributed in several ways; the main methods are connecting patch cords by hand and cordless communication using [send] and [receive] or OSC objects.

Obviously, I first excluded the by-hand connection method, as I need to freely perform several muscle contractions in order to test the features extracted by the system in real time. A cordless method would have fit my needs better, but it still did not satisfy me completely, as I wanted to route the MMG control values around my DSP framework quickly and intuitively.

The sssad lib by Frank Barknecht, coupled with the sender/receiver pair [iem_s] and [iem_r] from iemlib, eventually stood out as a good solution.

sssad is a very handy and simply implemented abstraction which handles preset saving within Pd by means of a clear message system. [iem_s] and [iem_r] are dynamically settable sender/receiver objects included in the powerful iemlib by Thomas Musil at IEM, Graz, Austria. I coupled them into two new macro send/receive abstractions, which eventually allowed me to route any MMG control value to any DSP control parameter with 4 clicks (in Run mode).

Four-click routing:

1st – click a bang to select which source control value has to be routed;

2nd – activate one of the 8 inputs within the router; it will automagically set that port to receive values from the previously selected source;

3rd – touch the slider controlling the DSP parameter you want to address;

4th – activate one of the 8 outputs within the router; it will automagically set that port to send values to the selected parameter.

This system works efficiently both for one-to-one and one-to-many data mapping. Implementing it dramatically improved the composition workflow; I can now easily execute gestures and immediately prototype a specific data mapping.
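The routing idea can be sketched outside Pd as a small lookup table. This is only an illustration in Python, not how sssad, [iem_s] or [iem_r] work internally; the class `Router` and the names `mmg_max_mean`, `delay_feedback` and `grain_density` are hypothetical.

```python
from collections import defaultdict


class Router:
    """Toy model of the router: each source fans out to zero or more parameters."""

    def __init__(self):
        # source name -> list of destination parameter names
        self.routes = defaultdict(list)
        # destination parameter name -> last value received
        self.params = {}

    def connect(self, source, param):
        """Route a source to a parameter; call again for one-to-many."""
        if param not in self.routes[source]:
            self.routes[source].append(param)

    def send(self, source, value):
        """Deliver a control value to every parameter routed from source."""
        for param in self.routes.get(source, []):
            self.params[param] = value


router = Router()
router.connect("mmg_max_mean", "delay_feedback")  # one-to-one
router.connect("mmg_max_mean", "grain_density")   # now one-to-many
router.send("mmg_max_mean", 0.42)
```

After the last call both hypothetical parameters hold the same value, which is the one-to-many case; a source with a single connection gives the one-to-one case.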

Besides, as you can see from the image above, I included a curve module, which enables the user to change on the fly the curve to be applied to the source value before it gets sent to the DSP framework.
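As a rough illustration of what such a curve stage does, here is a minimal Python sketch that assumes the control value is normalised to the 0..1 range and that the curve is a simple power function. Both assumptions are mine; the actual module may offer other curve shapes.

```python
def apply_curve(value, exponent=2.0):
    """Shape a normalised control value (0..1) with a power curve.

    With exponent > 1 the output rises slowly at first and faster near the top;
    with exponent < 1 it rises quickly at first; exponent == 1 is linear.
    """
    clamped = min(1.0, max(0.0, value))  # keep the input inside 0..1
    return clamped ** exponent
```

Changing `exponent` on the fly corresponds to reshaping how a weak or strong contraction translates into parameter movement.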

The mapping abstractions are happily embedded in the main sssad preset system, thus all I/O parameters can be safely stored and recalled with a bang.

Heading picture CC licensed by Kaens.

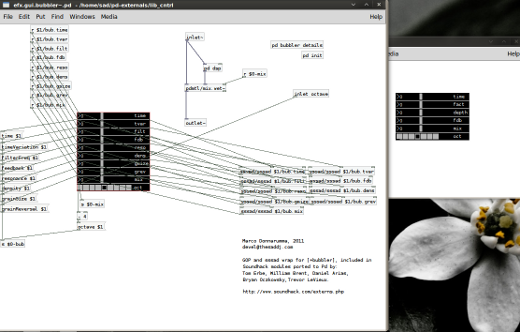

Embedding GOP Soundhack modules

During my exploration of DSP processes for muscle sounds I eventually realized I could make good use of the interesting Soundhack objects, which have been recently ported to Pd by Tom Erbe, William Brent and other developers (see Soundhack Externals).

Among the several modules now available in Pd, I focused my attention on [+bubbler] and [+pitchdelay].

The former is an efficient multi-purpose granulator, while the latter is a powerful pitch-shifting delay, which allows a functional delay saturation along with octave control; both work nicely in real time. More interestingly, performance testing showed that the max mean value (here referred to as a continuous event) produced by muscle contractions can be synchronously mapped to the feedback and loop depth of the [+pitchdelay] algorithm in order to obtain an immediately perceivable control over the sound processing.

The sound-gestures obtained through this mapping system are quite effective because the cause-and-effect interaction between the performer’s gesture and the sonic outcome is clear and transparent.

Here’s an audio sample. The perceived loudness of the sound and the amount of feedback are directly proportional to the amount of kinetic energy generated by the performer’s contractions.
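The proportional relationship can be sketched in Python as follows. This is a simplified stand-in, assuming the control value is the mean of the rectified signal over a short window and that the feedback parameter lives in a 0..0.9 range; the function names and ranges are hypothetical and do not describe the Soundhack objects themselves.

```python
def mean_level(samples):
    """Mean of the rectified signal over one analysis window."""
    return sum(abs(s) for s in samples) / len(samples)


def scale(value, in_max, out_min, out_max):
    """Map value from 0..in_max linearly onto out_min..out_max, clamped."""
    ratio = min(1.0, max(0.0, value / in_max))
    return out_min + ratio * (out_max - out_min)


# One window of a (made-up) muscle signal, mapped to a feedback amount.
window = [0.25, -0.25, 0.25, -0.25]
feedback = scale(mean_level(window), in_max=0.5, out_min=0.0, out_max=0.9)
```

A stronger contraction raises the mean level and therefore the feedback, which is what makes the cause and effect audible.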

Moreover, I also included sssad capabilities in my wrapper object, to be able to save presets within the DSP framework I’m developing.

I need to explore this algorithm further and identify further improvements for real time performance.

Software framework version 0.6.1

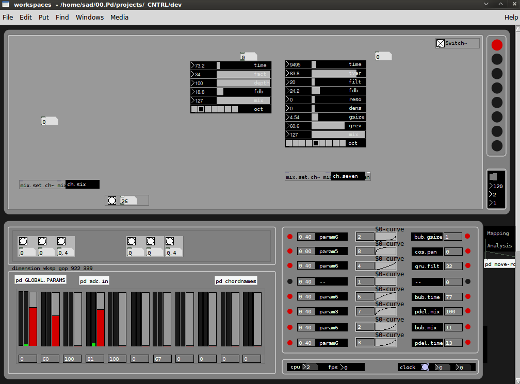

Recently I’ve been dedicating some good time to the software framework in Pure Data. After some early DSP experimentations and the improvements of the MMG sensor I had quite a clear idea about how to proceed further.

The software implementation actually started some time before the MMG research as a fork of C::NTR::L, a free, interactive environment for live media performance based on score following and pitch recognition, which I developed and publicly released under the GPL license last year.

When I started this investigation I decided to pick up from where I left off last year, so as to take advantage of previous experience, methods and ideas.

The graphic layout has been designed using the free, open source software Inkscape and Gimp.

The present interface consists of a workspace in which the user can dynamically load, connect and remove several audio processing modules (top); a sidebar for switching among 8 different workspaces (top right); some empty space that will be reserved for utility modules, such as a timebase and monitoring modules (middle); a channel strip to control each workspace volume and send amount (bottom); a square area used to load diverse modules such as the routing panel that you can see in the image (mid to bottom right). Modules and panels are dynamic, which means they can be moved and substituted in a click for fast and efficient prototyping.

So far several audio processing modules have been implemented:

- a feedback delay

- a chorus

- a timestretch object (for compression and expansion) based on looped sampling

- a single sideband modulation object (thanks to Andy Farnell for the tip about the efficiency of ssb modulation compared with tape modulation)

- what I called a grunger, namely a module consisting of a chain of reverb, distortion, bandpass filter and ssb pitch shifting (I have to thank my supervisor Martin Parker for the insight about pitch-shifting the wet signal of the reverb)

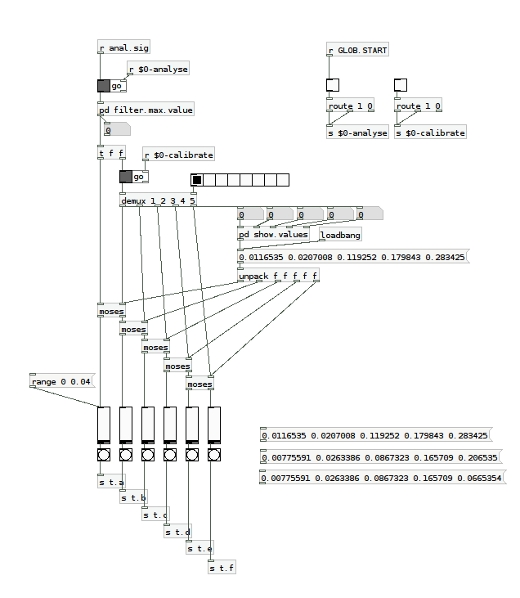

Another interesting process I could implement was a calibration system; it enables the performer to calibrate software parameters according to the different intensity of the contractions of each finger, the whole hand or the forearm (so far the MMG sensor has been tested for performance only on the forearm).

This process is proving extremely useful, as it allows the performer to customize the responsiveness of the hardware/software framework and to generate up to 5 different streams of control data by contracting each finger, the hand or the whole forearm.

The calibration code is a little rough, but it does work already. I believe exploring this method further can unveil exciting prospects.
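One way to picture such a calibration step is sketched below in Python. It assumes calibration simply records the strongest level observed for each gesture and later divides incoming levels by that peak; this is an illustrative guess, not the actual Pd code, and the class and gesture names are hypothetical.

```python
class Calibrator:
    """Per-gesture calibration: remember a peak, then normalise against it."""

    def __init__(self):
        # gesture name -> strongest level seen during calibration
        self.peaks = {}

    def learn(self, gesture, levels):
        """Store the peak level measured while the performer repeats a gesture."""
        self.peaks[gesture] = max(levels)

    def normalise(self, gesture, level):
        """Scale an incoming level to 0..1 relative to that gesture's peak."""
        peak = self.peaks[gesture]
        if peak <= 0:
            return 0.0
        return min(1.0, max(0.0, level / peak))


cal = Calibrator()
cal.learn("index_finger", [0.02, 0.05, 0.04])  # weak contractions
cal.learn("forearm", [0.3, 0.8, 0.6])          # strong contractions
```

With this normalisation a full finger contraction and a full forearm contraction both reach 1.0, even though their raw intensities differ widely.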

MMG sensor early calibration system | 2010

On the 7th of December I’m going to present the current state of the inquiry to the research staff of my department at Edinburgh University; I will present a short piece using the software above and the MMG sensing device. On the 8th we have also arranged an informal concert at our school in Alison House, so I’m looking forward to testing live the work done so far.

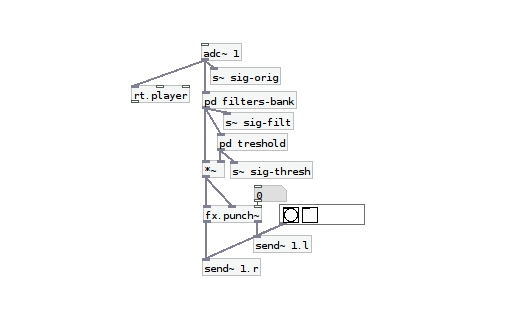

Pure Data MMG signal processing system early alpha

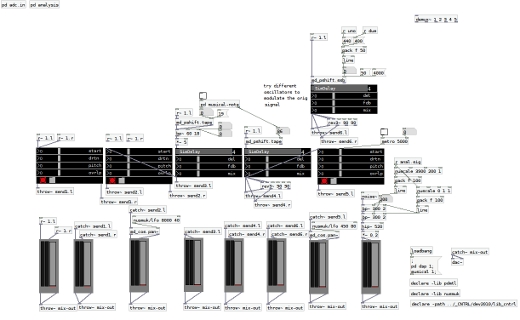

The hardware prototype has almost reached a good degree of stability and efficiency, so I’m now dedicating much more time to the development of the MMG signal processing system in Pure Data. How do I want the body to sound?

First, I coded a real time granulator and some simple delay lines to be applied to the MMG audio signal captured from the body.

Then I added a rough channel strip to manage up to 5 different processing chains.

Excuse the messy patching style, but this is just an early exploration…

However, once I started playing around with it, I soon realized that the original MMG signal coming from the hardware needed to be “cleaned up” before being actually useful. That’s why I added a subpatch dedicated to filtering out unneeded frequencies and enhancing the meaningful ones; at the same time I thought about a threshold process, which could enable Pure Data to tell whether the incoming signal is actually generated by voluntary muscle contractions or by a non-voluntary movement or background noise. This way the gesture becomes far more closely tied to the processed sound.
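A threshold process of this kind might look like the following Python sketch. It assumes the decision is made on a short moving average of the rectified signal, so that a single brief spike does not count as a contraction; the function name, window length and threshold are hypothetical and the filtering stage is left out.

```python
def detect_contractions(samples, threshold, window=4):
    """Return one flag per sample: True while the smoothed level exceeds threshold."""
    flags = []
    recent = []  # the last `window` rectified samples
    for s in samples:
        recent.append(abs(s))
        if len(recent) > window:
            recent.pop(0)
        level = sum(recent) / len(recent)  # moving average of the rectified signal
        flags.append(level > threshold)
    return flags


# A sustained burst is detected, an isolated spike is ignored.
burst = [0.01] * 4 + [0.5] * 4 + [0.01] * 8
spike = [0.01] * 3 + [0.3] + [0.01] * 4
burst_flags = detect_contractions(burst, threshold=0.1)
spike_flags = detect_contractions(spike, threshold=0.1)
```

The averaging window trades responsiveness for robustness: a longer window rejects more noise but reacts later to the start and end of a contraction.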

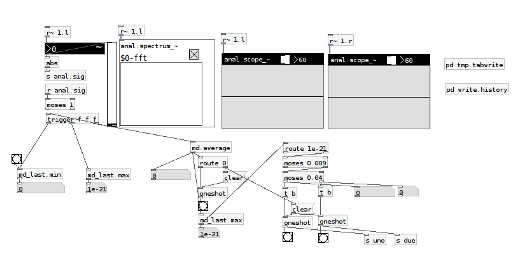

Finally, I needed quick and reliable visual feedback to help me analyse the MMG signal in real time. This subpatch includes an FFT spectrum analysis module and a simple real time spectrogram borrowed from the PDMTL lib.

I’m going to experiment with this system, and when it reaches a good degree of expressiveness I’ll record some live audio and post it here.

Listening to the body, first actual muscles sounds

I’m experimenting with a prototypical bio-sensing device (described here) I built with the precious help of Dorkbot ALBA at the Edinburgh Hacklab. Although at the moment the sensor is quite rough, it is already capable of capturing muscle sounds.

As the use of free, open source tools is an integral part of the methodology of this research, I’m currently using the awesome Ardour2 to monitor, record and analyse muscle sounds.

The first problem I encountered was the conductive capability of human skin; when the metal case of the microphone directly touches the skin, the body becomes a huge antenna attracting all the electromagnetic waves floating around. I’m now trying different ways of shielding the sensor, and this helps me to better understand how muscle vibrations are transmitted outside of the body and through the air.

Below you can listen to a couple of short clips of my heartbeat and voluntary arm contractions recorded with the MMG sensor. The audio files are raw, i.e. no processing has been applied to the original sound. Their frequency is extremely low and they might not be immediately audible; you may need to turn up the volume of your speakers or wear a pair of headphones.

Heartbeat

Voluntary arm contractions

(sounds like a low rumble, or distant thunder; clicks are caused by my bones cracking)

At this early development stage the MMG sensor capabilities seem quite promising; I can’t wait to plug the sensor into Pure Data and start trying some real time processing.

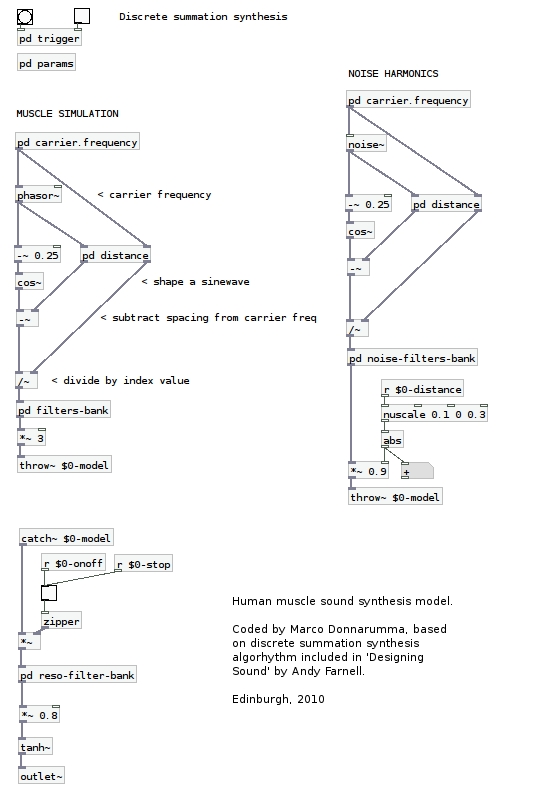

An audio synthesis model for muscles sounds

While waiting for the components I need to build the bio-sensing device, I started coding a synthesis model for human muscle sounds.

I illustrate below the basis of an exploratory study of the acoustics of biological body sounds. I describe the development of an audio synthesis model for muscle sounds which offers a deeper understanding of the body sound matter and provides the ground for further experimentation in composition.

I’m working with a machine running a Linux OS and the audio synthesis model is being implemented using the free, open source framework known as Pure Data, a graphical programming language developed by Miller Puckette. I’ve been using Pd for 4-5 years for other personal projects, thus I feel fairly comfortable working in such an environment.

Firstly, it is necessary to understand the physical phenomenon which makes muscles vibrate and sound.

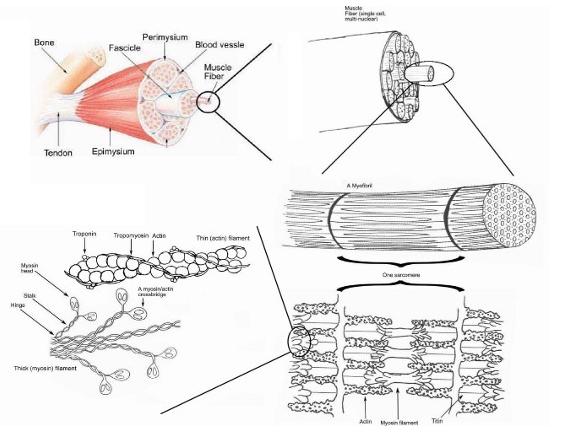

As illustrated in a previous blog post, muscles are formed by several layers of contractile filaments. Each of them can stretch and move past the others, vibrating at a very low frequency. However, audio recordings of muscle sounds show that their sonic response is not constant, but sounds more like a low and deep rumble. This might happen because the filaments do not vibrate in unison; rather, each one of them undergoes slightly different forces depending on its position and dimension, and therefore vibrates at a different frequency.

Eventually each partial (defined here as the single frequency of a specific filament) is summed to the others living in the same muscle fiber, which in turn are summed to the other muscle fibers living in the surrounding fascicle.

This phenomenon creates a subtle, complex audio spectrum which can be synthesised using the Discrete Summation Formula. DSF allows the synthesis of harmonic and inharmonic, band-limited or unlimited spectra, and can be controlled by an index, which seems to fit the requirements of this acoustic experiment perfectly.
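To make the idea of DSF concrete, here is a minimal Python sketch of the finite summation in its commonly cited closed form, checked against the brute-force sum of partials. It is not the Pd abstraction described in this post; the function names and the example frequencies are hypothetical, and the index `a` is assumed to lie between 0 and 1.

```python
import math


def dsf_closed_form(theta, beta, a, n):
    """Closed form of sum_{k=0}^{n} a**k * sin(theta + k*beta), for 0 <= a < 1."""
    numerator = (
        math.sin(theta)
        - a * math.sin(theta - beta)
        - a ** (n + 1) * (math.sin(theta + (n + 1) * beta)
                          - a * math.sin(theta + n * beta))
    )
    denominator = 1.0 + a * a - 2.0 * a * math.cos(beta)
    return numerator / denominator


def dsf_brute_force(theta, beta, a, n):
    """The same spectrum computed partial by partial, for comparison."""
    return sum(a ** k * math.sin(theta + k * beta) for k in range(n + 1))


def render(f0, spacing, a, n, num_samples, sample_rate=44100):
    """Render samples with partials at f0, f0 + spacing, f0 + 2*spacing, ..."""
    out = []
    for i in range(num_samples):
        t = i / sample_rate
        theta = 2.0 * math.pi * f0 * t
        beta = 2.0 * math.pi * spacing * t
        out.append(dsf_closed_form(theta, beta, a, n))
    return out


# Made-up values inside the low range discussed for muscle sounds.
buffer = render(f0=12.0, spacing=3.7, a=0.7, n=8, num_samples=441)
```

The index `a` sets how quickly the partials decay: values near 0 leave almost only the fundamental, while values near 1 give a denser spectrum. A spacing that is not a multiple of `f0` yields an inharmonic spectrum.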

Currently I’m studying the awesome book “Designing Sound” by Andy Farnell (MIT Press) and the synthesis model I’m coding is based on the Discrete Summation Synthesis explained in Technique, chapter 17, pp. 254-256.

I started by implementing the basic synthesis formula to create the fundamental sidebands, then I applied DSF to a noise generator to add some light distortion to the sinewaves by means of complex spectra formed by tiny, slow noise bursts. Filter banks have been applied to each spectrum in order to refine the sound and emphasise specific harmonics.

Finally the two layers have been summed and passed through another filter bank and a tanh function, which adds a more natural characteristic to the resulting impulse.

Below is a screenshot of the Pd abstraction.

Next, by applying several audio processing techniques to this model I hope to become more familiar with its physical composition and to develop the basis for a composition and design methodology of muscle sounds, which will then be employed with the actual sounds of my body.

How human muscles sound

According to Wikipedia “Muscle (from Latin musculus, diminutive of mus “mouse”) is the contractile tissue of animals… Muscle cells contain contractile filaments that move past each other and change the size of the cell. They are classified as skeletal, cardiac, or smooth muscles. Their function is to produce force and cause motion. Muscles can cause either locomotion of the organism itself or movement of internal organs.”

Top down view of skeletal muscle | Photo montage created by Raul654 | Wikimedia

What is not mentioned here is that the force produced by muscles causes sound too.

When filaments move and stretch they actually vibrate, therefore they create sound. Muscle sounds have a frequency between 5Hz and 45Hz, thus they can be captured with a highly sensitive microphone.

A sample of sounding muscle is available here, thanks to the Open Prosthetics research group (whose banner reads “Prosthetics shouldn’t cost an arm and a leg”).

In fact muscle sounds have mostly been studied in the field of Biomedical Engineering as alternative control data for low cost, open source prosthetics applications, and it’s thanks to these studies that I could gather precious technical information and learn about several designs of muscle sound sensor devices.

Most notably, the work of Jorge Silva at Prism Lab has been fundamental for my research. His MASc thesis represents a comprehensive resource of information and technical insights.

The device designed at Prism Lab is a coupled microphone-accelerometer sensor capable of capturing the audio signal of muscle sounds. It also eliminates noise and interference in order to precisely capture voluntary muscle contraction data.

This technology is called mechanomyography (MMG) and it represents the basis of my further musical and performative experimentation with the sounding (human) body.

I just ordered components to start implementing the sensor device, so hopefully in a week or two I’ll be able to hear my body resonating.