
Expressivity, Muscle Sensing and Intelligent Machines at CHI 2015

We (the EAVI research group at Goldsmiths, University of London) just got back from the SIGCHI Conference on Computer-Human Interaction in Seoul, Korea. CHI is one of the largest conferences in the field, with over 3000 attendees this year.

The CHI experience is as overwhelming as it is exciting. With 15 parallel tracks, there’s always something interesting to see and something equally interesting you are going to miss. To add to the thrill, this year the conference was hosted in a massive multipurpose complex, the COEX, which includes a mall, restaurants and other conferences all in the same venue. I leave the rest to your imagination.

My contribution to the conference was twofold. Over the weekend I joined the workshop “Collaborating with Intelligent Machines” and during the week we presented a long paper on our latest research on using bimodal muscle sensing to understand expressive gesture.

Organised by consortium members of the GiantSteps research project, Native Instruments (DE), STEIM (NL) and Dept. of Computational Perception, Johannes Kepler University (AT), the workshop ran for a full day and involved several researchers working on embodied musical interaction, music information retrieval and instrument design.

Led by Kristina Andersen, Florian Grote and Peter Knees, we first went through brief presentations of personal research, including a keynote by Byungjun Kwon, then engaged in a brainstorming session on the possibilities of future music machines, and finally went on to build (im)possible musical instruments using props such as cardboard, scissors, tape and plastic cups.

We closed the workshop by discussing the ideas that had emerged throughout the day, and in the evening we joined local experimental musicians for a sweet concert and some drinks.

On Monday the conference started at full speed. Dodging rivers of attendees, we managed to make our way into the keynote venue, and started catching up with colleagues from around the world.

On Thursday, we presented a long paper entitled “Understanding Gesture Expressivity through Muscle Sensing”. The paper, by Baptiste Caramiaux, myself and Atau Tanaka, is actually a journal article which we have published in a recent issue of the Transactions on Computer-Human Interaction (TOCHI).

Our contribution focuses on expressivity as a visceral capacity of the human body. In the article, we argue that to understand what makes a gesture expressive, one needs to consider not only its spatial placement and orientation, but also its dynamics and the mechanisms enacting them.

We start by defining gesture and gesture expressivity, and then present fundamental aspects of muscle activity and ways to capture information through electromyography (EMG) and mechanomyography (MMG). We present pilot studies that inspect the ability of users to control spatial and temporal variations of 2D shapes and that use muscle sensing to assess expressive information in gesture execution beyond space and time.
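To make the idea of capturing information from a muscle signal a little more concrete, here is a minimal sketch of one generic amplitude feature, a windowed root-mean-square (RMS) envelope. This is an illustration under my own assumptions, not the feature extraction described in the article: the function name `rms_envelope`, the window size and the synthetic samples are all hypothetical.

```python
import math


def rms_envelope(signal, window):
    """Return the RMS amplitude of each non-overlapping window of `signal`.

    Hypothetical helper for illustration; trailing samples that do not
    fill a whole window are ignored.
    """
    if window <= 0:
        raise ValueError("window must be positive")
    envelope = []
    for start in range(0, len(signal) - window + 1, window):
        frame = signal[start:start + window]
        envelope.append(math.sqrt(sum(x * x for x in frame) / window))
    return envelope


# Synthetic data (not real EMG/MMG): a quiet segment, then a burst.
quiet = [0.01, -0.01] * 4
burst = [0.5, -0.5] * 4
envelope = rms_envelope(quiet + burst, window=4)
print(envelope)
```

The envelope stays low during the quiet segment and jumps during the burst, which is the kind of coarse "effort" cue that a raw oscillating signal does not show directly.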

This leads us to the design of a study that explores the notion of gesture power in terms of control and sensing. The results give interaction designers insights for moving beyond simplistic gestural interaction, towards the design of interactions that draw upon the nuances of expressive gesture.

Finally, we showed a small excerpt from a new performance I’ll be previewing at the upcoming NIME conference in Louisiana (see below, and yes, that’s a sneaky preview!). Here I have implemented the feature extraction system described in the article, modifying and adapting the system to the fuzzier requirements of a live performance.

The talk was very well received and prompted some interesting questions for future work. Some pointed to the use of our system together with posture recognition systems to enrich the user’s input, and others questioned whether subtle tension and force levels can be examined with our methodology. Food for thought!

To conclude, here are some personal highlights of the conference:

- “The transfer of learning as HCI similarity: Towards an objective assessment of the sensory-motor basis of naturalness”

- “Advancing muscle-computer interfaces with high-density electromyography”

- “Proprioceptive interaction”

- “From user-centered to adoption-centered design: a case study of an HCI research innovation becoming a product”

- “Collaborative accessibility: How blind and sighted companions co-create accessible home spaces”

- “I’d hide you: Performing live broadcasting in public”

- “As light as your footsteps: Altering walking sounds to change perceived body weight, emotional state and gait”

Needless to say, Seoul was surprising and heartwarming as usual, so… ’til the next time!

Combining biophysical and spatial modalities for musical expression

I kicked off my PhD studies by extending the Xth Sense, a new biophysical musical instrument based on a muscle sound sensor I developed, with spatial and inertial sensors, namely whole-body motion capture (mocap) and an accelerometer. The aim: to understand the potential of combined sensor data for multimodal control of new musical interfaces.

An excerpt from our related paper:

“In the field of New Interfaces for Musical Expression (NIME), sensor-based systems capture gesture in live musical performance. In contrast with studio-based music composition, NIME (which began as a workshop at CHI 2001) focuses on real-time performance. Early examples of interactive musical instrument performance that pre-date the NIME conference include the work of Michel Waisvisz and his instrument, The Hands, a set of augmented gloves which captures data from accelerometers, buttons, mercury orientation sensors, and ultrasound distance sensors (developed at STEIM). The use of multiple sensors on one instrument points to complementary modes of interaction with an instrument. However these NIME instruments have for the most part not been developed or studied explicitly from a multimodal interaction perspective.”

Together with team colleagues Baptiste Caramiaux and Atau Tanaka, we designed a study of bodily musical gesture. We recorded and observed the sound-gesture vocabulary of my performance entitled Music for Flesh II.

The data recorded from the gesture were two mechanomyogram signals (MMG, or muscle sound), one 3D vector from the accelerometer, and mocap data, namely the 3D positions and quaternions of my limbs.
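As a rough picture of what one time-aligned sample of such a recording could look like, here is a small sketch of a data structure. It is purely illustrative: the class name `MultimodalFrame`, its field names, the limb label and the values are hypothetical, and it assumes the streams have already been resampled to a common clock, which the actual dataset may or may not do.

```python
from dataclasses import dataclass
from typing import Dict, Tuple

Vec3 = Tuple[float, float, float]
Quat = Tuple[float, float, float, float]  # assumed order: (w, x, y, z)


@dataclass
class MultimodalFrame:
    """One hypothetical time-aligned sample of the multimodal recording."""
    time_s: float                    # timestamp in seconds
    mmg: Tuple[float, float]         # two muscle sound (MMG) channels
    accel: Vec3                      # one 3D accelerometer vector
    limb_positions: Dict[str, Vec3]  # mocap 3D position per limb
    limb_rotations: Dict[str, Quat]  # mocap orientation per limb


# Made-up values, only to show the shape of the data.
frame = MultimodalFrame(
    time_s=0.0,
    mmg=(0.02, -0.01),
    accel=(0.0, 0.0, 9.81),
    limb_positions={"right_forearm": (0.3, 1.1, 0.2)},
    limb_rotations={"right_forearm": (1.0, 0.0, 0.0, 0.0)},
)
print(len(frame.mmg), frame.limb_rotations["right_forearm"])
```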

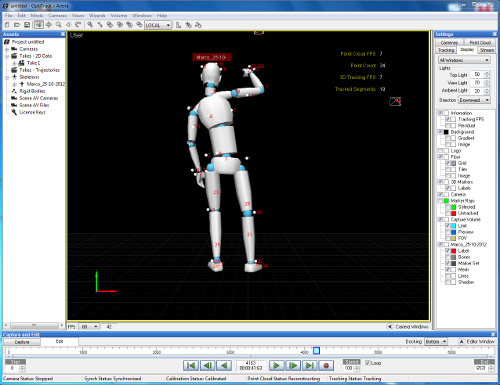

My virtual body performing Music for Flesh II, as seen by the motion capture system.

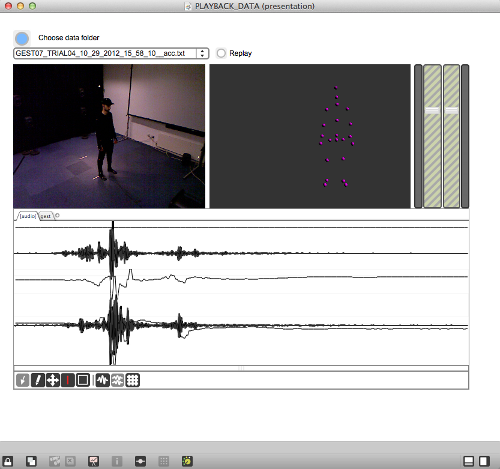

We created a public multimodal dataset and performed a qualitative analysis of those data. By using a custom patch to visualise and compare the different types of data (pictured below) we were able to observe different forms of complementarity in the information collected. We noted 3 types of complementarity: synchronicity, coupling, and correlation. You can find the details of our findings in the related Work in Progress paper, published for the recent TEI conference on Tangible, Embedded, and Embodied Interaction in Barcelona, Spain.

The software we developed to visualise the multimodal dataset.

To summarise, our findings show that different types of sensor data do have complementary aspects; these might depend on the type and sensitivity of the sensor, and on the complexity of the gesture. Besides, what might seem a single gesture can be segmented into sections that present different kinds of complementarity among the different modalities. This points to the possibility for a performer to engage with richer control of musical interfaces by training on a multimodal control of different types of sensing devices; that is, the gestural and biophysical control of musical interfaces based on a combined analysis of different sensor data.
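Our analysis was qualitative, but for readers who want a feel for how the correlation form of complementarity could be put into numbers, here is a minimal sketch that computes a Pearson correlation coefficient between two feature streams. Everything in it is an assumption for illustration: the function name `pearson`, the variable names and the sample values are made up and do not come from the dataset.

```python
import math


def pearson(a, b):
    """Pearson correlation coefficient of two equal-length sequences."""
    if len(a) != len(b) or len(a) < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_a = sum(a) / len(a)
    mean_b = sum(b) / len(b)
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    if var_a == 0 or var_b == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return cov / math.sqrt(var_a * var_b)


# Made-up feature streams: a muscle sound envelope and an
# accelerometer magnitude that rise and fall together.
mmg_envelope = [0.1, 0.4, 0.8, 0.5, 0.2]
accel_magnitude = [1.0, 1.3, 1.7, 1.4, 1.1]
r = pearson(mmg_envelope, accel_magnitude)
print(round(r, 3))
```

A value near 1 or -1 would suggest the two modalities carry largely redundant information over that stretch of the gesture, while a value near 0 would hint that they capture different aspects of it. Computing this per segment rather than over a whole gesture would fit the observation that complementarity changes from one section to the next.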