Sketches: representing biological gesture for musical corporeal interaction

Some sketches and notes I am currently working on together with Baptiste Caramiaux and Atau Tanaka towards the creation of a corporeal musical space generated by biological gesture, that is, the complex behaviour of different biosignals during performance.

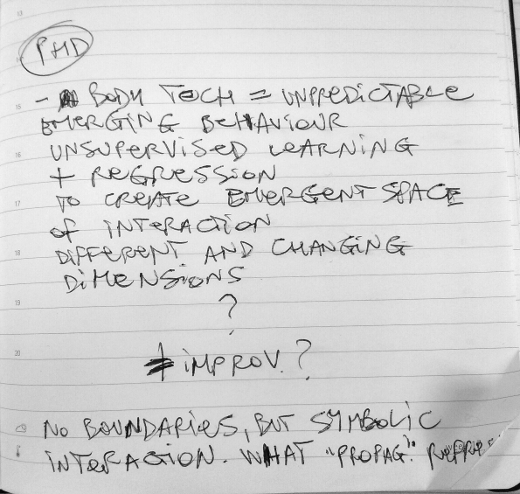

General questions: why use different biosignals in a multimodal musical instrument? How can machine learning algorithms be meaningfully deployed for improvised music?

Machine learning (ML) methods in music are generally used to recognise pre-determined gestures. The risk in this case is that the performer ends up being concerned with performing gestures in a way that the computer can understand. On the other hand, ML methods could possibly be used to represent an emergent space of interaction that would allow the performer freedom of expression. This space shall not be defined beforehand, but rather created and altered dynamically according to any gesture.

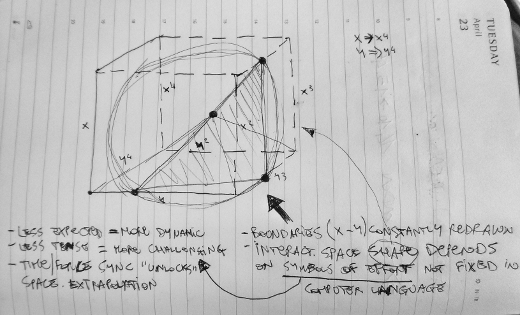

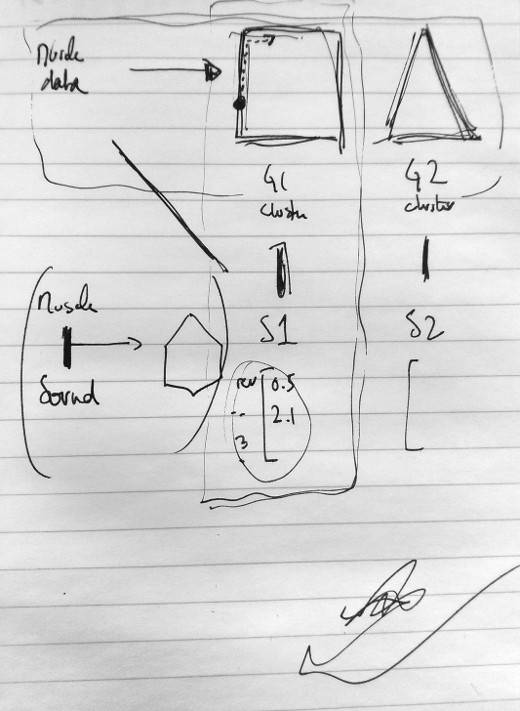

An unsupervised ML method shall represent in real time the complementary information in the electromyographic (EMG) and mechanomyographic (MMG) signals. Each output shall be rendered as an axis of the space of interaction (x, y, …). As the relations between the two biosignals change, the number of axes and their orientation change as well. The space of interaction is thus constantly redefined and reconstructed. The aim is to perform the body as an expressive process, and to leave to the machine the task of representing this process by extracting information that would not be understood without the aid of computing devices.
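To make the idea of axes that appear, disappear and rotate a little more concrete, here is a minimal sketch. It is only an illustration under assumptions: the notes above do not name a specific algorithm, so principal component analysis (PCA) over a short window of features stands in for the unsupervised method, and the function name `interaction_axes`, the two-column feature layout and the `variance_kept` threshold are all hypothetical.

```python
import numpy as np

def interaction_axes(window, variance_kept=0.9):
    """Estimate the axes of the interaction space for one analysis window.

    window: array of shape (n_samples, n_features); here the columns are
    assumed to hold one EMG descriptor and one MMG descriptor.
    Returns an array of shape (n_axes, n_features), one unit vector per axis.
    """
    centered = window - window.mean(axis=0)
    # The rows of vt are the PCA directions of the centered window.
    _, s, vt = np.linalg.svd(centered, full_matrices=False)
    variance = s ** 2
    total = variance.sum()
    if total == 0:
        # A perfectly flat window carries no information, hence no axes.
        return np.empty((0, window.shape[1]))
    ratio = np.cumsum(variance) / total
    # Keep the smallest number of axes that explains enough variance.
    n_axes = int(np.searchsorted(ratio, variance_kept)) + 1
    return vt[:n_axes]

# Synthetic stand-ins for the biosignal features (not real data).
rng = np.random.default_rng(0)
shared = rng.standard_normal(200)

# EMG and MMG move together: the space collapses to a single axis.
coupled = np.column_stack(
    [shared, 2.0 * shared + 0.01 * rng.standard_normal(200)]
)
# EMG and MMG move independently: two axes are needed.
independent = np.column_stack(
    [rng.standard_normal(200), rng.standard_normal(200)]
)

axes_coupled = interaction_axes(coupled)
axes_independent = interaction_axes(independent)
print(axes_coupled.shape[0], axes_independent.shape[0])
```

Calling the function again on each new window would let the number and orientation of the axes follow the changing relation between the two signals. A real-time version would need some smoothing between windows, which this sketch leaves out.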

Each kind of gesture shall be represented within a specific interaction space. The performer would then control sonic forms by moving within, and outside of, the space. The ways in which the performer travels through the interaction space shall be defined by a continuous function derived from the gesture-sound interaction.