The Xth Sense™ (2010-14) is a free and open biophysical technology. With it you can produce music with the sound of your body. The Xth Sense captures sounds from heart, blood and muscles and uses them to integrate the human body with a digital interactive system for sound and video production. In 2012, it was named the “world’s most innovative new musical instrument” and awarded the first prize in the Margaret Guthman New Musical Instrument Competition by the Georgia Tech Center for Music Technology (US). Today, the Xth Sense is used by a steadily growing community of creatives, ranging from performing artists and musicians, to researchers in physiotherapy and prosthetics, and universities and students in diverse fields.

Check the video below to see what the Xth Sense can do!

And by the way, its name is pronounced ecsth sense, not tenth sense… 😛

You can build an Xth Sense and learn how to use it either on your own by checking the documentation here, or by taking part in one of the regular workshops taught worldwide by the Xth Sense creator, Marco Donnarumma. If you are an organisation and wish to host a workshop, please get in touch.

Ominous | Incarnated sound sculpture (Xth Sense) from Marco Donnarumma on Vimeo.

The XS amplifies the muscle sounds of the human body and uses them as control data and musical material.

When a performer contracts any muscle, low-frequency sounds are produced (technically called mechanomyogram or MMG). By capturing these sounds with a microphone sensor embedded in the XS armband, and live sampling them with a computer, you get music in real time. It’s like connecting a guitar to an effect pedal; in this case you connect your body to a computer. You have complete control over the shape of the sounds by simply contracting your muscles in different ways. For the tech-savvy out there, more detailed information can be found in this list of publications.

The software driving the system is written in Pure Data aka Pd. It comes with a user-friendly interface, feature extraction, global preset saving, and many other features. The software can be used as a stand-alone Pd patch, or it can communicate with other software, robots or lighting systems via the MIDI or OSC protocols. The code is open source (released under a GPL v3.0 license).

The XS biosensor is designed to be built by anyone. It can be built manually from scratch. The design is documented and freely available under a Creative Commons Share-Alike license.

The XS is a free and open project started by individuals and kept evolving by a worldwide community. You can build your own Xth Sense biophysical sensors from scratch.

Building the wearable sensor is very easy (you need to solder literally 4 components) so it requires only a basic knowledge of soldering.

The schematic, the parts list, and a tutorial are available below. Building an XS sensor by hand takes between 1 and 4 hours, depending on your skill and your tools. Try to work with the right tools; it is usually very easy to find them in an electronics shop in your town.

Xth Sense, biophysical, wearable sensor.

Copyright (C) 2011 Marco Donnarumma

The Xth Sense Wearable Sensor (“The work”) is provided under the terms of this Creative Commons Public License (“CCPL” or “LICENSE”). The work is protected by copyright and/or other applicable law. Any use of the work other than as authorized under this license or copyright law is prohibited.

You are free (and encouraged) to use, modify, extend the Xth Sense Wearable Sensor; however, if you wish to distribute the result of your modification, or any other hardware that depends on the Xth Sense Wearable Sensor you have to distribute it under the same license, and to include the mention of the original copyright. See the Creative Commons Public License for more details.

Introducing the Xth Sense (XS): What it is and what it can be used for.

Musical applications: How you can make music with it.

Other applications: What else you can do with it.

Framework: Description of the XS software environment.

Step by step tutorial: How to install the XS software, connect the wearable sensors and start making noise.

Troubleshooting: Some solutions to the most common issues.

Xth Sense and its documentation are copyright of Marco Donnarumma, and are licensed under a Creative Commons License. Click here for details.

Introduction

The XS is composed of biophysical sensors and custom software.

At the onset of a muscle contraction, energy is released in the form of sound. That is to say, much like the string of a violin, each muscle tissue vibrates at specific frequencies and produces a sound (mechanomyogram or MMG). Because the frequency of muscle sounds sits between 5 Hz and 45 Hz, the MMG is not easily audible to the human ear, but it is indeed a sound wave that resonates from the body.

The MMG data is quite different from the locative data you can gather with accelerometers and the like; whereas the latter reports the consequence of a movement, the former directly represents the energy impulse that causes that movement. If you add to this a high sampling rate (up to 192,000 Hz if your sound card supports it) and very low latency (measured at 2.3 ms), you can see why the XS can be so responsive and expressive.
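To put the sampling rate and latency figures in perspective, here is a small worked calculation in Python. It is only a sketch of the general relationship between buffer size, sampling rate and buffering delay; it does not describe how the latency quoted above was actually measured. The 64-frame block is Pd's default block size, and the function names are made up for this example.

```python
def buffer_latency_ms(buffer_frames: int, sample_rate_hz: int) -> float:
    """Time needed to fill one audio buffer, in milliseconds."""
    return 1000.0 * buffer_frames / sample_rate_hz


def frames_for_latency(latency_ms: float, sample_rate_hz: int) -> int:
    """Number of audio frames that span the given latency (rounded)."""
    return round(latency_ms * sample_rate_hz / 1000.0)


# Delay contributed by a single 64-frame block at common sampling rates.
for rate in (44_100, 96_000, 192_000):
    print(rate, "Hz:", round(buffer_latency_ms(64, rate), 3), "ms per block")

# How many frames fit into 2.3 ms at the highest sampling rate.
print(frames_for_latency(2.3, 192_000), "frames in 2.3 ms at 192,000 Hz")
```

The higher the sampling rate, the less time one block of audio takes to fill, which is one reason a fast sound card helps responsiveness.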

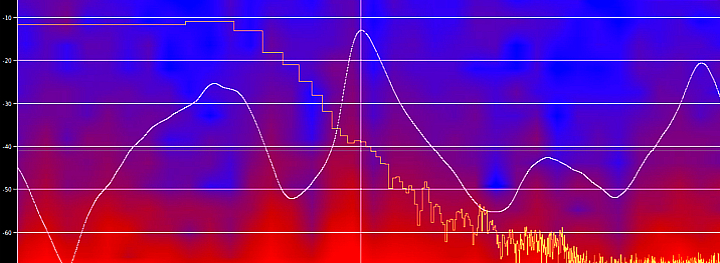

The XS sensors capture the low-frequency acoustic vibrations produced by a performer’s body and send them to the computer as an audio input. The XS software analyzes the MMG in order to extract the characteristics of the movements, such as dynamics of a single gesture, maximum amplitude of a series of gestures in time, etc.

These are fed to some algorithms that produce the control data (12 discrete and continuous variables for each sensor) to drive the sound processing of the original MMG.
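As a rough illustration of this kind of analysis, the Python sketch below computes two simple features from a synthetic signal: an RMS envelope (a stand-in for the dynamics of a single gesture) and a running maximum (a stand-in for the maximum amplitude of a series of gestures). These are not the actual XS algorithms, which live in the Pd patch; the test signal, the 1000 Hz sampling rate and all names are assumptions chosen for readability.

```python
import math


def rms_envelope(signal, window):
    """Root-mean-square level of consecutive, non-overlapping windows."""
    envelope = []
    for start in range(0, len(signal) - window + 1, window):
        chunk = signal[start:start + window]
        envelope.append(math.sqrt(sum(x * x for x in chunk) / window))
    return envelope


def running_max(values):
    """Largest value seen so far at each step (a simple peak hold)."""
    peaks, current = [], 0.0
    for value in values:
        current = max(current, value)
        peaks.append(current)
    return peaks


# Synthetic stand-in for a muscle sound: a 25 Hz burst that fades in and out.
SAMPLE_RATE = 1000  # Hz, hypothetical and deliberately low
burst = [
    math.sin(2 * math.pi * 25 * n / SAMPLE_RATE) * math.sin(math.pi * n / SAMPLE_RATE)
    for n in range(SAMPLE_RATE)
]

envelope = rms_envelope(burst, window=40)  # 40 samples = one 25 Hz cycle
peak_hold = running_max(envelope)
print(len(envelope), "envelope points, loudest:", round(max(envelope), 3))
```

The envelope rises and falls with the contraction, while the peak hold only ever rises, so the two values respond very differently when mapped to a sound parameter.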

Finally, the system plays back both the raw muscle sounds (slightly transposed to make them more audible, to about 50-60 Hz) and the processed muscle sounds.

I called this model of performance biophysical music, in contrast with biomusic, which is based on the electrical impulses of muscles and brainwaves.

Musical applications

From the live sampling of the muscle sounds, through the playback and manipulation of pre-recorded sounds, to the real time processing of traditional musical instruments, the XS is the first musical instrument of its kind to offer such flexibility at a very low cost and with a free and open technology.

The XS can be played as a traditional musical instrument: acoustic sounds can be produced and modified by contracting the muscles. The XS can also be used as a gestural controller to control audio synthesis or sample processing. The XS can be used in both modes simultaneously, and the data stream can be sent to other software or hardware via MIDI and OSC.
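For readers curious about what an OSC data stream looks like on the wire, here is a minimal Python sketch that encodes a single float value as an OSC 1.0 message and can send it over UDP. It is not taken from the XS software: the address `/xs/feature`, the host and the port are hypothetical, and the receiving application would need to be configured to match.

```python
import socket
import struct


def osc_string(text: str) -> bytes:
    """Encode an OSC string: ASCII, null-terminated, padded to 4 bytes."""
    data = text.encode("ascii") + b"\x00"
    return data + b"\x00" * (-len(data) % 4)


def osc_float_message(address: str, value: float) -> bytes:
    """Build an OSC message carrying one big-endian 32-bit float."""
    return osc_string(address) + osc_string(",f") + struct.pack(">f", value)


def send_feature(value, address="/xs/feature", host="127.0.0.1", port=9000):
    """Send one value over UDP (address, host and port are hypothetical)."""
    packet = osc_float_message(address, value)
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.sendto(packet, (host, port))
    return packet


packet = osc_float_message("/xs/feature", 0.5)
print(len(packet), "bytes:", packet)
```

Calling `send_feature(0.5)` would transmit the same packet to a program listening on the chosen port.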

The most interesting performance feature of such a system is the possibility to expressively control a multi-layered processing of the MMG sounds by simply exerting diverse amounts of kinetic energy. For instance, stronger and wider gestures could be mapped so as to generate sharp, higher resonating frequencies coupled with a very short reverb time, whereas weaker and more confined gestures could be deployed to produce gentle, lower resonances with a longer reverb time.
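The mapping described above can be sketched in a few lines of Python. This is only one possible mapping under stated assumptions, not the one used in the XS: the gesture energy is assumed to be normalised to the range 0 to 1, and the frequency and reverb ranges are invented for the example.

```python
# Hypothetical parameter ranges, chosen only for illustration.
LOW_HZ, HIGH_HZ = 200.0, 3200.0      # resonant frequency range
LONG_REVERB_S, SHORT_REVERB_S = 4.0, 0.5  # reverb time range


def map_gesture(energy: float) -> dict:
    """Map normalised gesture energy (0..1) to resonance and reverb time."""
    e = min(max(energy, 0.0), 1.0)  # clamp out-of-range input
    # Exponential interpolation: equal steps in energy give equal
    # musical intervals rather than equal steps in Hz.
    resonance_hz = LOW_HZ * (HIGH_HZ / LOW_HZ) ** e
    # Linear interpolation, inverted: more energy means shorter reverb.
    reverb_s = LONG_REVERB_S + (SHORT_REVERB_S - LONG_REVERB_S) * e
    return {"resonance_hz": resonance_hz, "reverb_s": reverb_s}


for energy in (0.0, 0.5, 1.0):
    print(energy, map_gesture(energy))
```

A weak gesture lands near the low, long-reverb end of both ranges and a strong one near the high, short-reverb end, with everything in between changing smoothly.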

The form and color of the sonic outcome are continuously shaped in real time with very low latency (measured at 2.5ms), so the interaction between the perceived sonic force and the spatiality of the gesture is neat, transparent and fully expressive.

Other applications

Muscle sounds provide very accurate control data; their signal-to-noise ratio is higher than that of many other sensor signals. This means that with a bit of imagination you can use muscle sounds to train people suffering from muscle disease, control prosthetic hands, control live videos or lights, control your music player, turn on your washing machine, make your robot walk, and any other fancy hack you can imagine.

If you are intrigued and want to realize a cool hack or use the XS for your next project but you are not sure how, please get in touch with me.

Framework

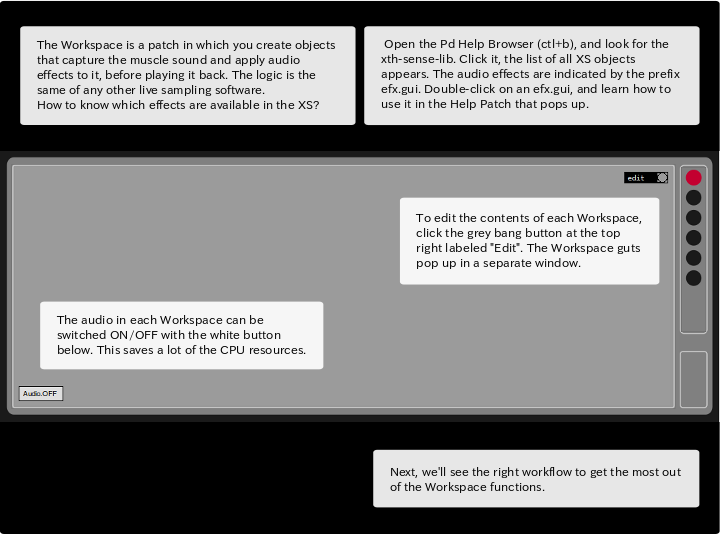

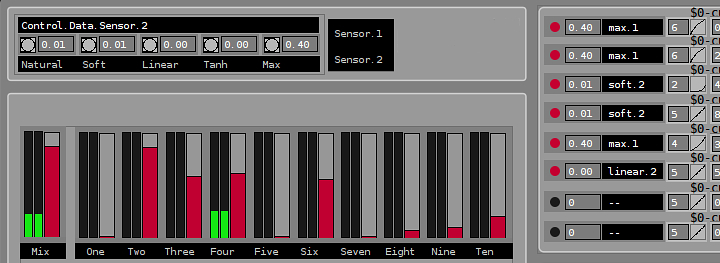

The XS software is a complete digital framework to work with muscle sounds (mechanomyogram or MMG). The software is a large and multi-layered Pure Data patch. This means that you can use the usual features of Pure Data aka Pd, plus the specific functions of the XS. An XS patch can be saved as a .pd file. The software offers an intuitive Graphical User Interface (GUI).

However, before getting your hands on the XS, I recommend getting familiar with the basics of Pd (adding an object to a patch, making connections, etc.) by using this tutorial.

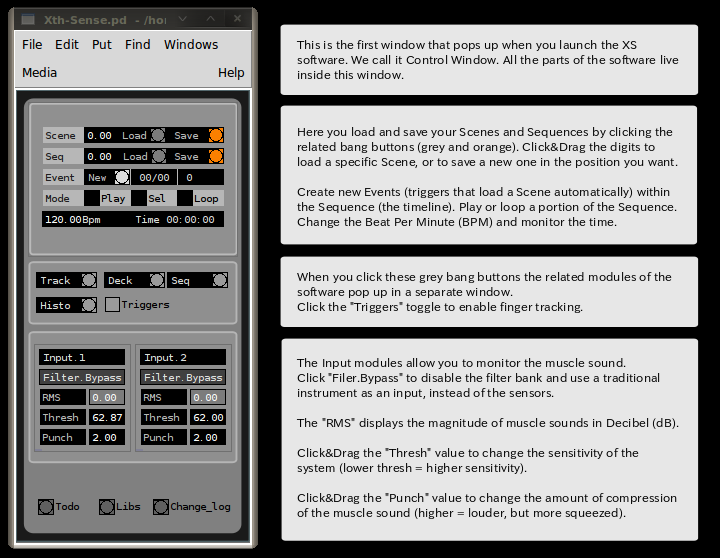

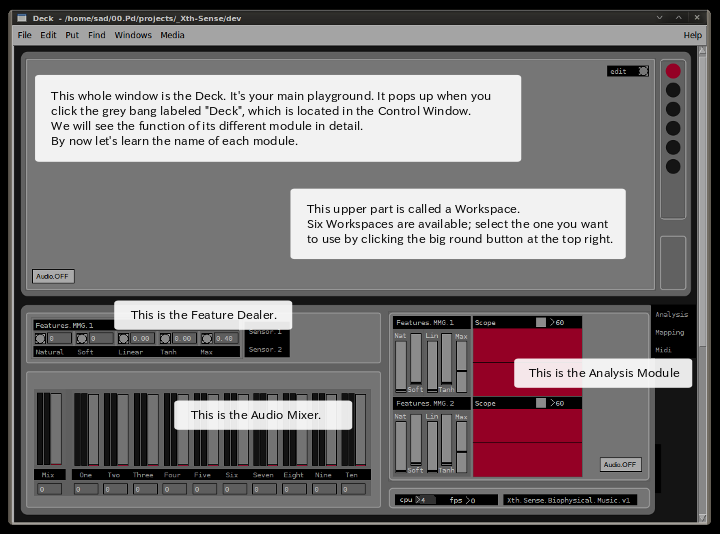

The software receives the audio input from the XS sensors and performs three functions: data analysis, feature extraction and Digital Signal Processing (DSP). The XS receives the muscle sounds produced by your body, extracts 12 features, and allows you to connect these features to audio effect parameters, which you control to process the muscle sounds into music.

When you first open the XS software a small rectangular window with a GUI comes up. The whole XS software is included here. We call this the Control window. Here you can save and load your global presets, monitor the sensor inputs, as well as pop up other modules of the software.

By clicking the related buttons the two main modules pop up: the Deck and the Sequence.

The Deck is the main playground. Here you can create Digital Signal Processing (DSP) chains (arrays of audio effects and other Pd objects) to live sample the muscle sound, monitor the features that are being extracted, and set mapping definitions to control the software. All the parameters that are visible in the Deck can be saved into a global preset, which is called a Scene. You can save up to 20 Scenes.

The Sequence module allows the computer to manage the structure in time of your performance or musical piece. By clicking the bang labeled “New”, you can add an Event: this is basically a trigger that tells the computer to load a Scene at a specific point in time. To specify which Scene you want to be loaded, simply click on the black area of the Event object you just created; in the smaller window that pops up you can set the Scene to be loaded, by clicking and dragging the number box.
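The relationship between Events and Scenes can be pictured with a small Python model. This is a conceptual sketch only, not how the Sequence module is implemented in Pd; the class names, the use of seconds as the time unit and the 1 to 20 Scene numbering are assumptions made for the example.

```python
from bisect import bisect_right
from dataclasses import dataclass

MAX_SCENES = 20  # the Deck can store up to 20 Scenes


@dataclass(frozen=True, order=True)
class Event:
    time_s: float  # point in time at which the Event fires (assumed seconds)
    scene: int     # Scene to load (assumed numbering: 1..MAX_SCENES)


class Sequence:
    """Keeps Events ordered in time and reports which Scene is active."""

    def __init__(self):
        self._events = []

    def add_event(self, time_s: float, scene: int) -> None:
        if not 1 <= scene <= MAX_SCENES:
            raise ValueError(f"scene must be between 1 and {MAX_SCENES}")
        self._events.append(Event(time_s, scene))
        self._events.sort()

    def scene_at(self, time_s: float):
        """Scene loaded by the latest Event at or before time_s, else None."""
        times = [event.time_s for event in self._events]
        index = bisect_right(times, time_s)
        return self._events[index - 1].scene if index else None


sequence = Sequence()
sequence.add_event(0.0, 1)
sequence.add_event(30.0, 4)
sequence.add_event(12.5, 2)  # Events may be added in any order
print(sequence.scene_at(20.0))
```

Each Event simply marks the moment from which a given Scene applies, so a whole piece reduces to a short, ordered list of time and Scene pairs.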

Troubleshooting

Before starting, it can save you a lot of headaches to make sure that:

a) the XS sensor is properly connected to your sound card or computer;

b) the battery in the XS sensor box is fully charged;

c) the buttons labeled “Audio.OFF” that you find in the Analysis module and in each Workspace are ON.

There is no sound coming out of the XS software and nothing happens.

Make sure that the Pd audio engine is ON (the keyboard shortcut is Ctrl+.). You should now hear sound. If not, find the Media tab, then click Test Audio and MIDI. There you can activate a tone generator to test the audio. If you hear the high-pitched tone, the audio engine in Pd is active, which means something is wrong within your XS patch. Check that the buttons labeled “Audio.OFF” that you find in the Analysis module and in each Workspace are ON. Double check that you properly connected the cords among the objects you created in the Workspace.

I see number boxes and sliders moving in the XS, but I can’t hear any sound.

The Pd audio engine in this case is active. Check that the buttons labeled “Audio.OFF” that you find in the Analysis module and in each Workspace are ON. If so, there’s something wrong with the way you connected the objects in the Workspace. Double check and make proper connections. Still no sound? Have you increased the volume of the channels you are using by sliding up the faders in the Mixer?

I try to set a mapping definition, but the Router keeps reporting the same parameter instead of the one I chose.

This happens because when the Pd audio engine is ON the control values are continuously active. Solution: simply switch the Pd audio OFF while you set your mapping definitions. When you are done, you can switch the Pd audio ON again.

The Xth Sense software and all the code it depends on are free software. It is a complete, interactive environment for the performance of biophysical music (real time music generated by biological and physical processes of the body).

The software is written in Pure Data aka Pd, and runs on Linux and Mac OS X. With a bit of hacking it can run on Windows too.

The software depends on the xth-sense-lib. This is a large collection of Pd objects designed to capture, analyze, process and playback muscle sounds. In addition, you will need the libraries iemguts (by IOhannes Zmoelnig) and soundhack (by Tom Erbe), which are linked below.

Remember to download the Step by step tutorial, and check the Get Started section of this website (click the tab above).

Downloads

Xth Sense software

- Linux 32bit and 64bit

- Mac OS X (10.6 or newer)

- Binary files for Linux 32bit (tested on Ubuntu Lucid and newer)

- Binary files for Mac OS X (tested on 10.6 or newer)

xth-sense-lib

iemguts and soundhack additional Pd libraries.

These binaries have been tested on the indicated operating system (OS). Choose yours below.

If your OS is not listed, you will need to download the source code and compile the libraries on your machine.


Xth Sense, biophysical music and responsive environments.

Copyright (C) 2011 Marco Donnarumma

This program is free software: you can redistribute it and/or modify it under the terms of the GNU General Public License as published by the Free Software Foundation, either version 3 of the License, or (at your option) any later version.

This program is distributed in the hope that it will be useful, but WITHOUT ANY WARRANTY; without even the implied warranty of MERCHANTABILITY or FITNESS FOR A PARTICULAR PURPOSE. See the GNU General Public License for more details.

So why am I doing this for free?

Because we need to believe we do not need major corporations or closed devices to create innovation.

The biotechnologies that are commercially available and truly usable are closed (you cannot modify them) and expensive (you have to pay a good amount of money for them). Fortunately, the Do It Yourself (DIY) movement is getting increasingly stronger in the re-appropriation of technologies.

The Xth Sense is not only a tool, it is an aesthetic. Its goal is to spread the belief that we own our body and that we are all capable of working and playing with high-end biotechnologies. No need to wait for a corporate research team to come up with something “new”.

The downside is that I am developing this project on my own, and although I try my best to deliver a good and functional system, I could well use some help. If you wish to contribute in any way to the Xth Sense project, please do get in touch with me, and we will take it from there!

This website, the tutorial, the videos, the images and everything related to the Xth Sense are created with a Linux operating system and exclusively free and open software.

Biophysical music is a term coined by the Xth Sense creator and performer, Marco Donnarumma, to define music that is a joint result of bioacoustic and physical body mechanisms. It is different from Biomusic, another kind of musical practice based on bioelectric signals to drive computer-generated sounds.

The central principle underpinning the Xth Sense (XS) is not to “interface” the human body to an interactive system, but rather to approach the former as an actual and complete musical instrument.

Below you can view a live recording of Music for Flesh II, for the Xth Sense, at The University of Edinburgh, UK, March 2011.

Music for Flesh II | Interactive music performance for enhanced body (Xth Sense) from Marco Donnarumma on Vimeo.

Augmented musical instruments and physical computing techniques are generally based on the relation user>controller>system: the performer interacts with a control interface (a physical controller or sensor system) and modifies the results and/or rules of a computing system. Sometimes this approach can confine, and perhaps drive, the kinetic expression of a performer, leaving less room for her physical energy and non-verbal communication. Besides, because the sonic outcome of such performances is often digitally synthesised, the overall performance can lack liveness.

The XS completely transcends the paradigm of the user interface by capturing sounds and control data directly from the performer’s body. There is no mediation between body movements and music because the raw sound material originates within the fibres of the body, and the sound manipulations are driven by the vibrations of the performer’s muscle tissue.

Technical description

The XS fosters a new and authentic interaction between man and machines.

By enabling a computer to sense and interact with the biosonic potential of muscle tissues, the XS approaches the biological body as a means for computational artistry. During a performance, muscle movements and blood flow produce subcutaneous mechanical oscillations, which are nothing but low-frequency sound waves (mechanomyogram or MMG). Two microphone sensors capture the sounds created by the performer’s limbs and send them to a computer. The computer develops an understanding of the performer’s kinetic behaviour by listening to the friction of her flesh. Specific gestures, force levels and patterns are identified in real time by the computer; then, according to this information, it algorithmically manipulates the sound of the flesh and diffuses it through a variety of multi-channel sound systems.

The neural and biological signals that drive the performer’s actions become analogous expressive matter, for they emerge as a tangible sound experience.

Support and awards

The work was developed at the SLE, Sound Lab Edinburgh – the audio research group at The University of Edinburgh, and was kindly supported by the Edinburgh Hacklab and Dorkbot ALBA. The project was finalized during an Artistic Development Residency at Inspace, Edinburgh. Inspace kindly sponsored the work by providing technical and logistical support, and organizing a public vernissage for the official launch of the project within the artistic research program “Non-Bio Boom”.

The XS technology was awarded the first prize at the Margaret Guthman Musical Instrument Competition (Georgia Tech Center for Music Technology, US, 2012) as the “world’s most innovative new musical instrument”.

The Scottish Arts Council, Creative Scotland, has awarded a grant in support of my participation in the Korean Electro Acoustic Community 2011 conference in Seoul, South Korea. The research was endowed twice with a PRE travel grant by the University of Edinburgh.

Additional Information

The use of open source technologies is an integral aspect of the research. The biosensing wearable device was designed and implemented by Marco Donnarumma, with the support of Andrea Donnarumma and Marianna Cozzolino. The Pure Data-based framework for real time analysis and processing of biological sounds was designed and coded by the author on a Linux machine, with inspiring advice from Martin Parker, Sean Williams, Owen Green, Jaime Oliver, and Andy Farnell.

Related works

Hypo Chrysos

Action art for vexed body and biophysical media by Marco Donnarumma.

Into the Flesh

A musical piece for Xth Sense, trombone and double bass by Shiori Usui.

Music for Flesh II

Interactive music performance for enhanced body by Marco Donnarumma.

Pictures courtesy of Chris Scott.